AI Governance Risk as a Failure of Responsibility and Oversight

- 1 Legal definition: Governance risk as responsibility and control failure

- 2 Misconception (model error): Why accuracy and “hallucinations” are not the governance question

- 3 Ownership and oversight gaps: No accountable owner, unclear approvals, fragmented monitoring

- 4 Legal consequences: How governance failure becomes regulatory and [AI risk & liability](https://wcr.legal/services/ai-law/governance-risk/ai-risk-liability/) exposure

1. Legal Definition: AI Governance Risk as a Failure of Responsibility and Oversight

AI governance risk is not a technical flaw in a model. It is a legal failure of responsibility, control, and oversight within an organization that deploys AI systems. The legal system does not evaluate “accuracy” in isolation; it evaluates who is responsible for consequences, who had authority over deployment, and whether oversight was proportionate to foreseeable impact.

From a legal perspective, governance risk arises when an organization cannot clearly answer fundamental accountability questions: Who owns the AI system? Who approved its use case? Who monitors its outputs? Who has authority to intervene? And how are these responsibilities documented? Where these answers are fragmented, informal, or absent, the risk is structural—even if the model performs as designed.

- No identifiable accountable owner: deployment decisions occur without a legally responsible function or decision-maker.

- Unclear approval authority: AI use cases are integrated operationally without formal authorization aligned with legal exposure.

- Fragmented oversight: monitoring is distributed across teams with no unified control narrative.

- Delegation without control: AI outputs influence decisions, but escalation and intervention mechanisms are undefined.

- Insufficient documentation: the organization cannot reconstruct why a decision was made or who validated reliance on the system.
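As an illustration only, the accountability questions above can be treated as fields in a governance register, with any unanswered field reported as a gap. The following is a minimal sketch, not a prescribed legal standard: the `GovernanceRecord` class, its field names, and the `governance_gaps` helper are hypothetical names introduced here for the example.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class GovernanceRecord:
    """Hypothetical register entry for one deployed AI use case."""
    use_case: str
    accountable_owner: Optional[str] = None       # who owns the AI system
    approved_by: Optional[str] = None             # who approved the use case
    monitored_by: Optional[str] = None            # who monitors its outputs
    intervention_authority: Optional[str] = None  # who may override or suspend
    documentation_ref: Optional[str] = None       # where responsibilities are recorded


def governance_gaps(record: GovernanceRecord) -> List[str]:
    """Return a label for every accountability question left unanswered."""
    labels = {
        "accountable_owner": "No identifiable accountable owner",
        "approved_by": "Unclear approval authority",
        "monitored_by": "No assigned monitoring responsibility",
        "intervention_authority": "Delegation without control",
        "documentation_ref": "Insufficient documentation",
    }
    return [label for name, label in labels.items() if not getattr(record, name)]


# Example data is invented for illustration.
record = GovernanceRecord(
    use_case="credit pre-screening",
    accountable_owner="Head of Retail Credit",
    monitored_by="Model Risk team",
)
for gap in governance_gaps(record):
    print(gap)
```

In this example the record names an owner and a monitoring function, so the remaining gaps concern approval, intervention authority, and documentation. A complete record shows only that the questions have answers on paper; it does not establish that oversight is proportionate in practice.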

Governance risk therefore concerns the relationship between AI systems and legally relevant conduct. When AI outputs influence eligibility, pricing, risk allocation, access to services, content moderation, or operational enforcement, the organization is not merely using software. It is structuring decision-making authority. The legal question shifts from “does the model work?” to “who bears responsibility for its consequences?”

- Accuracy does not define legality: a statistically robust system can still create unlawful outcomes if oversight is defective.

- “Hallucinations” are not the core issue: even correct outputs can trigger liability if relied upon without proper governance.

- Autonomy does not remove accountability: responsibility remains with identifiable persons and entities.

- Technical control differs from legal control: having API access is not equivalent to having governance authority.

AI governance risk is the probability that an organization will incur regulatory or liability exposure because it cannot demonstrate clear responsibility, documented oversight, and defensible control over AI systems that influence legally relevant decisions.

2. Misconception: AI Governance Risk Is Not Model Error

A persistent misunderstanding in corporate environments is that AI governance risk is equivalent to technical failure. In this view, governance becomes a matter of improving accuracy, reducing hallucinations, hardening prompts, or tuning performance thresholds. This approach confuses operational robustness with legal accountability.

Governance risk exists even when a model performs exactly as intended. The legal system does not ask whether a prediction was statistically optimal. It asks whether responsibility was clearly allocated, whether oversight was proportionate to impact, and whether decision authority was exercised within defined and defensible boundaries.

Why the “model error” framing is legally insufficient

1. Law evaluates conduct, not performance metrics

- Liability can arise from reliance, even if outputs are technically consistent.

- Discriminatory impact may occur despite high aggregate accuracy.

- Regulatory breaches can result from process defects rather than statistical error.

2. Delegation changes the legal question

- When AI outputs influence approvals, pricing, or enforcement, the system becomes part of decision-making conduct.

- The issue shifts from “was the model wrong?” to “who authorized reliance on it?”

- Oversight must correspond to the materiality of the consequences.

3. Accountability cannot be outsourced to code

- Autonomous output does not dissolve organizational responsibility.

- Third-party AI does not eliminate the deploying entity’s duty of care.

- Internal fragmentation does not reduce exposure; it increases it.

Treating governance risk as a subset of IT quality control leads to structural blind spots. Compliance, legal, and board-level functions may assume that once technical testing is completed, the system is “safe.” However, governance risk is not eliminated by validation metrics. It is mitigated only when responsibility chains, approval authority, escalation mechanisms, and documentation standards are clearly defined.

3. Ownership and Oversight Gaps: Where Governance Risk Forms in Practice

Governance risk typically emerges before any incident occurs. The signal is not a failed model but an organizational gap: AI is in production, decisions are being influenced, yet no function can articulate a clear ownership chain, approval authority, or oversight duty that is legally defensible.

In corporate environments, AI is often integrated through procurement, platform tooling, and distributed product teams. This produces “horizontal” adoption: multiple business units rely on the same models or embedded assistants, while accountability remains vertical and fragmented. The result is a predictable governance failure mode: responsibility exists in law, but it is not assigned operationally.

How gaps appear along the responsibility chain

- 1 Deployment happens as “tooling”: AI enters production via feature rollout, vendor integration, or internal automation, without legal qualification of the activity.

- 2 Outputs become decision-relevant: scores, rankings, or generated text shape approvals, pricing, enforcement, or communications as a default decision input.

- 3 Responsibility becomes distributed: Product owns the feature, IT owns infrastructure, Security owns access, and Compliance is reactive, so no single accountable owner remains.

- 4 Escalation pathways are undefined: when outputs create adverse outcomes, there is no documented authority to override, suspend, or re-approve the use case.
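One way to picture a defined escalation pathway is a use case whose suspension and re-approval can only be triggered by roles documented in advance, with each action recorded. The sketch below is illustrative and rests on assumptions: the `UseCase` class, the `Status` values, and the role names are hypothetical and are not drawn from any statute or standard.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import List, Set, Tuple


class Status(Enum):
    APPROVED = "approved"
    SUSPENDED = "suspended"


@dataclass
class UseCase:
    name: str
    # Roles documented in advance as holding escalation authority (hypothetical).
    escalation_roles: Set[str]
    status: Status = Status.APPROVED
    # Each entry: (action, acting role, stated reason).
    history: List[Tuple[str, str, str]] = field(default_factory=list)

    def _act(self, role: str, action: str, reason: str, new_status: Status) -> None:
        # Refuse the action unless the acting role was given authority beforehand.
        if role not in self.escalation_roles:
            raise PermissionError(
                f"{role} has no documented authority to {action} '{self.name}'"
            )
        self.status = new_status
        self.history.append((action, role, reason))

    def suspend(self, role: str, reason: str) -> None:
        self._act(role, "suspend", reason, Status.SUSPENDED)

    def reapprove(self, role: str, reason: str) -> None:
        self._act(role, "re-approve", reason, Status.APPROVED)


case = UseCase("automated claims triage", escalation_roles={"Chief Risk Officer"})
case.suspend("Chief Risk Officer", "spike in customer complaints")
print(case.status.value)  # suspended
```

The design point is that authority is fixed before an incident occurs. An attempt by an undocumented role to suspend the use case fails visibly instead of producing an informal, unrecorded intervention.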

Common “ownership gap” indicators

- No accountable owner for the AI system as a legally consequential decision component (only technical owners exist).

- Approvals are informal (e.g., “product signed off”), with no record of legal qualification or risk acceptance.

- Monitoring is fragmented (performance metrics exist, but impact monitoring, complaints handling, and audit trails are not integrated).

- Vendor dependence is opaque (model updates, data provenance, and change notices are not governance-controlled).

- Human review is nominal (human-in-the-loop exists on paper, but reliance is effectively automated).

Why this matches the client trigger

- The organization uses AI across workflows, products, or subsidiaries.

- Yet there is no clear legal ownership for the system’s effects: accountability is treated as “shared.”

- Oversight exists as scattered controls (security, QA, model metrics) but not as a defensible responsibility structure.

These gaps matter because they are not merely managerial defects. They directly affect legal exposure: when challenged, the organization must demonstrate who exercised decision authority, how reliance was approved, and how oversight matched the foreseeable consequences of the use case. Without an accountable ownership chain, that demonstration becomes materially difficult.

4. Legal Consequences: How Governance Failure Escalates

AI governance risk rarely manifests as an immediate regulatory action. It escalates through stages. What begins as a structural ownership gap can evolve into contractual disputes, supervisory scrutiny, or formal liability exposure once consequences materialize.

The absence of clearly defined responsibility and oversight becomes legally relevant when an AI-influenced decision produces measurable impact. At that point, authorities, counterparties, or affected individuals will not assess the model’s architecture first. They will assess the organization’s control structure.

Stage 1 — Internal Incident or Complaint

Governance risk becomes visible when outputs produce adverse outcomes: discriminatory patterns, inaccurate representations, unjustified denials, or reputational harm. The organization attempts to investigate.

- No clear owner can explain why reliance was approved.

- Decision logs are incomplete or fragmented.

- Escalation authority is ambiguous.

Stage 2 — External Challenge

Customers, counterparties, employees, or partners raise formal objections. Questions focus on attribution and oversight rather than algorithmic mechanics.

- Who authorized this deployment?

- Was human review meaningful or symbolic?

- Were foreseeable risks evaluated and documented?

Stage 3 — Regulatory or Litigation Exposure

Supervisory authorities or courts assess whether the organization exercised adequate control over decision-making systems.

- Process failures become evidence of negligence or non-compliance.

- Fragmented oversight weakens defensibility.

- Contractual disclaimers generally do not override statutory duties.

Importantly, this escalation does not require a dedicated AI statute. Existing legal regimes—consumer protection, anti-discrimination law, financial regulation, product liability, corporate governance duties—already contain consequence-based standards. Once AI becomes part of decision infrastructure, those standards apply to the organization’s conduct.

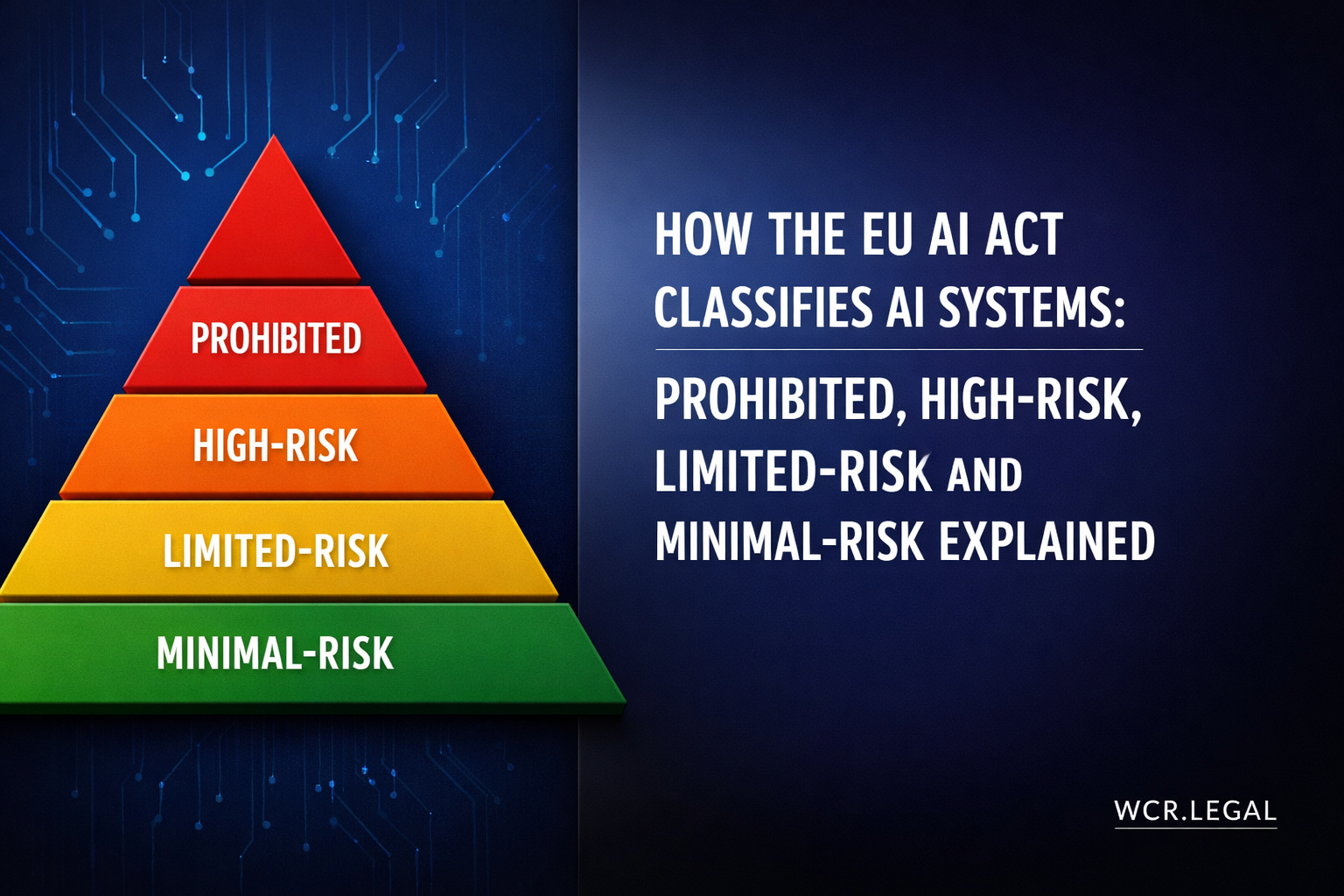

5. Regulation Without “AI Laws”: Why Governance Risk Is Already Legally Relevant

AI governance risk does not depend on the existence of a dedicated artificial intelligence statute. In many jurisdictions, exposure arises through pre-existing legal regimes that regulate consequences, decision-making processes, and organizational duties rather than specific technologies.

Once AI systems influence legally relevant conduct, established bodies of law may attach automatically. Governance risk becomes visible when an organization cannot demonstrate structured oversight under those regimes.

Consequence-based legal regimes

- Consumer protection law: misleading outputs, unfair commercial practices, or automated pricing effects.

- Anti-discrimination frameworks: disproportionate impact in hiring, access, or eligibility decisions.

- Product and service liability: harm arising from reliance on AI-influenced outcomes.

- Corporate governance duties: board-level responsibility for risk management and internal controls.

Activity-based regulatory perimeters

- Financial regulation: AI-driven credit scoring, risk profiling, fraud classification.

- Employment law: automated screening or performance evaluation.

- Data protection frameworks: large-scale processing, profiling, and automated decision-making.

- Platform and digital services supervision: ranking, moderation, and systemic risk controls.

These regimes share a common principle: they evaluate the conduct of the organization, not the technical architecture of the system. If AI influences regulated activity, governance must align with the legal standards applicable to that activity.

The absence of an “AI Act” in a given jurisdiction does not eliminate exposure. Governance risk materializes wherever AI intersects with regulated conduct or protected interests. In such cases, the decisive issue is whether responsibility, oversight, and escalation mechanisms are demonstrably aligned with existing legal duties.

6. Cross-Border and Group Exposure: Attribution Under Structural Complexity

AI governance risk intensifies in multi-entity, cross-border, and vendor-dependent environments. When development, hosting, deployment, and commercialization occur across different legal persons and jurisdictions, attribution becomes structurally complex.

In such contexts, governance risk is not confined to one defective decision. It becomes embedded in the corporate architecture itself. Legal systems will evaluate control, benefit, and influence to determine responsibility—regardless of how internal organizational charts distribute operational roles.

Multi-entity group structures

- Parent entities define strategy while subsidiaries execute AI-driven operations.

- Decision authority may be informal rather than formally delegated.

- Group oversight mechanisms may not explicitly include AI as a governed risk category.

- Supervisory authorities may attribute responsibility based on effective control, not contractual form.

Cross-border deployment

- Data processing may occur in one jurisdiction while users are located in another.

- Different regulatory expectations may apply simultaneously.

- Conflicts of law may arise concerning automated decision-making and transparency duties.

- Oversight functions may not be harmonized across group entities.

Third-party AI providers

- Model architecture and updates are controlled externally.

- Data provenance and training parameters may be opaque.

- Contractual allocations of risk may not align with regulatory attribution.

- Change management processes may be insufficiently governed.

In these scenarios, governance risk emerges from the mismatch between operational distribution and legal attribution. The fact that a vendor controls model training does not remove the deploying entity’s duty of care. The fact that a subsidiary operates the system does not automatically insulate the parent entity from supervisory scrutiny.

7. Systemic Conclusion: From Governance Risk to Governance Frameworks

AI governance risk is not an isolated compliance issue. It is a structural indicator that decision authority, oversight, and responsibility have not been aligned with the legal consequences of AI deployment.

Across the preceding sections, a consistent pattern emerges: governance risk does not originate in technical imperfection. It originates in organizational ambiguity. When AI systems influence legally relevant decisions without clearly defined ownership, escalation authority, and documentation standards, exposure becomes predictable.

Core structural conclusions

AI systems alter the decision architecture of organizations. They introduce probabilistic reasoning into processes that were previously manual, linear, or easily attributable. This transformation changes the legal analysis in three fundamental ways:

- Responsibility must be explicitly assigned: shared or assumed accountability is insufficient when consequences are material.

- Oversight must be proportionate to impact: high-impact use cases require demonstrable governance intensity.

- Control must be evidentiary: the organization must be able to reconstruct decisions and justify reliance.

Where these elements are absent, AI governance risk becomes systemic. It affects investor due diligence, regulatory review, cross-border expansion, licensing processes, and litigation defensibility. Governance therefore operates as legal infrastructure rather than as a supplementary compliance layer.
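To make the evidentiary-control requirement more concrete, the following sketch shows an append-only decision log from which a single AI-influenced decision can later be reconstructed. It is a simplified illustration under stated assumptions: `DecisionEntry`, `DecisionLog`, and all field names are hypothetical, and an actual record-keeping design would depend on the applicable legal regime and retention rules.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import Dict, Optional


@dataclass(frozen=True)
class DecisionEntry:
    """Hypothetical record of one AI-influenced decision."""
    decision_id: str
    use_case: str
    model_version: str
    output_summary: str
    reliance_approved_by: str       # who authorized reliance on the output
    human_reviewer: Optional[str]   # None means no human review took place
    recorded_at: str                # UTC timestamp set when the entry is logged


class DecisionLog:
    def __init__(self) -> None:
        self._entries: Dict[str, DecisionEntry] = {}

    def record(self, **fields: Optional[str]) -> DecisionEntry:
        entry = DecisionEntry(
            recorded_at=datetime.now(timezone.utc).isoformat(), **fields
        )
        # Append-only: an existing decision can never be overwritten.
        if entry.decision_id in self._entries:
            raise ValueError(f"decision {entry.decision_id} is already recorded")
        self._entries[entry.decision_id] = entry
        return entry

    def reconstruct(self, decision_id: str) -> str:
        """Return the stored facts needed to explain one decision, as JSON."""
        return json.dumps(asdict(self._entries[decision_id]), indent=2)


log = DecisionLog()
log.record(
    decision_id="D-0001",
    use_case="loan eligibility",
    model_version="scoring-model-v3",
    output_summary="application flagged for manual review",
    reliance_approved_by="Head of Retail Credit",
    human_reviewer=None,
)
print(log.reconstruct("D-0001"))
```

Recording the absence of human review explicitly, as in this example, matters as much as recording its presence: it lets the organization state what actually happened without inferring it after the fact.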

Next analytical step

Defining AI governance risk clarifies the exposure. The next step is structural: designing governance architectures capable of allocating responsibility, embedding oversight, and aligning AI deployment with legal standards. This transition is addressed in the following section: AI Governance Frameworks.