The EU Artificial Intelligence Act: What Non‑EU Providers and Deployers Must Know About Placing AI Systems on the EU Market

Regulation (EU) 2024/1689 reaches far beyond Europe's borders. Any company whose AI system is placed on the EU market, put into service in the EU, or whose AI output is used within the EU is subject to the AI Act — regardless of where that company is incorporated. This guide covers extraterritorial scope, the risk classification system, prohibited practices, high-risk obligations, and the compliance timeline that every non-EU provider and deployer needs to understand.

Section 1 — What the EU AI Act Is and Who the Key Actors Are

Regulation (EU) 2024/1689, the EU Artificial Intelligence Act, is the world's first comprehensive binding legal framework for artificial intelligence. It entered into force on 1 August 2024, and most of its provisions apply from 2 August 2026, with earlier dates for prohibited practices (2 February 2025) and general-purpose AI models (2 August 2025). Unlike most EU regulations, the AI Act is designed with explicit extraterritorial reach: it applies to any AI system that is placed on the EU market or whose outputs are used within the EU, regardless of where the provider is established.

The AI Act does not regulate AI systems in the abstract — it regulates actors in the AI value chain according to their role and the risks their AI systems present. Understanding which role your organisation plays is the starting point for any compliance analysis. The obligations, liability exposure, and timelines differ significantly depending on whether you are a provider, deployer, importer, or distributor.

The AI Act defines an AI system as a machine-based system designed to operate with varying levels of autonomy and that may exhibit adaptiveness after deployment — and which, for explicit or implicit objectives, infers from the input it receives how to generate outputs such as predictions, content, recommendations, or decisions that can influence physical or virtual environments.

This definition is intentionally broad and technology-neutral. It captures machine learning models, generative AI systems, expert systems with adaptive elements, and AI components embedded in products. Software that processes inputs deterministically according to fixed rules — without inference — is generally not an AI system under the Act.

The Four Key Actors — Definitions and Obligations

The AI Act creates distinct obligations for each actor in the AI supply chain. The same organisation may occupy more than one role simultaneously — for example, a company that develops an AI model (provider) and also uses it internally to make decisions about employees (deployer) holds obligations in both capacities.
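The overlap between roles can be sketched in a few lines of Python. This is a minimal illustration only, using simplified versions of the role tests defined in this section; the `ActorFacts` fields and the `roles_for` function are hypothetical names invented for the example, and a real role analysis turns on the full statutory definitions.

```python
from dataclasses import dataclass


@dataclass
class ActorFacts:
    """Hypothetical fact pattern for one organisation and one AI system."""
    develops_system: bool = False
    markets_under_own_name: bool = False
    uses_professionally: bool = False
    established_in_eu: bool = False
    places_non_eu_branded_system_on_eu_market: bool = False
    makes_available_in_supply_chain: bool = False
    modifies_system: bool = False


def roles_for(facts: ActorFacts) -> set[str]:
    """Return every role the facts suggest; roles are not mutually exclusive."""
    roles: set[str] = set()
    if facts.develops_system and facts.markets_under_own_name:
        roles.add("provider")
    if facts.uses_professionally:
        roles.add("deployer")
    if facts.established_in_eu and facts.places_non_eu_branded_system_on_eu_market:
        roles.add("importer")
    # A distributor is a supply-chain actor other than the provider or importer.
    if facts.makes_available_in_supply_chain and not roles & {"provider", "importer"}:
        if facts.modifies_system or facts.markets_under_own_name:
            # Modifying or rebranding may lead to reclassification as a provider.
            roles.add("provider")
        else:
            roles.add("distributor")
    return roles
```

For the example in the paragraph above, `roles_for(ActorFacts(develops_system=True, markets_under_own_name=True, uses_professionally=True))` returns both `"provider"` and `"deployer"`, so both sets of obligations would need to be mapped.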

Provider

A natural or legal person, public authority, agency or other body that develops an AI system or a general-purpose AI model and places it on the market or puts it into service under their own name or trademark, whether for payment or free of charge.

Providers bear the heaviest obligations under the AI Act: conformity assessments, technical documentation, quality management systems, EU declarations of conformity, CE marking, and post-market monitoring. For non-EU providers, the obligations apply the moment a system reaches the EU market.

Highest obligation tier

Deployer

A natural or legal person, public authority, agency or other body that uses an AI system under its authority, except where the system is used in the course of personal, non-professional activity.

Deployers are typically enterprise customers of AI providers — businesses that integrate a third-party AI system into their operations. Deployers of high-risk AI systems have significant independent obligations: human oversight, use in accordance with instructions, fundamental rights impact assessments, and incident reporting.

Significant obligations for high-risk AI

Importer

A natural or legal person established or located in the EU that places on the EU market an AI system bearing the name or trademark of a natural or legal person established outside the EU.

Importers function as a compliance gateway for non-EU-provider products entering the EU market. They must verify that the provider has conducted conformity assessments, that documentation is complete, and that the provider has appointed an EU authorized representative. If a provider fails its obligations, the importer may become liable.

EU-established; gateway role

Distributor

A natural or legal person in the supply chain (other than the provider or importer) that makes an AI system available on the EU market without modifying its properties.

Distributors must verify that the AI system bears the CE marking (for high-risk systems), that it is accompanied by the required documentation, and that it has not been modified in a way that may affect its compliance. A distributor that modifies an AI system or places it on the market under its own name may be reclassified as a provider.

Supply chain compliance role

Section 2 — Extraterritorial Scope: How Non-EU Providers Are Caught

The AI Act's extraterritorial reach is one of its most commercially significant features. Article 2 establishes that the regulation applies not on the basis of where an AI system is developed or where its provider is established, but on the basis of where the system enters the EU market or where its output is used. A company headquartered in the United States, Singapore, or the United Kingdom that provides AI systems to EU customers, or whose AI outputs are used by EU-located parties, is subject to the AI Act in the same way as an EU-based company.

This approach mirrors the EU's GDPR extraterritorial model — and like GDPR, it is not merely theoretical. The AI Act establishes enforcement mechanisms specifically designed to reach non-EU entities: the EU authorized representative requirement, market surveillance authority powers, and a cross-border enforcement framework coordinated by the European AI Office.

The Four Article 2 Triggers — When Non-EU Entities Are Caught

Placing an AI system on the EU market

"Placing on the market" means making an AI system available for the first time on the EU market — whether for payment or free of charge. A non-EU provider that offers its AI system to EU businesses or consumers through a website, API, or distribution channel has placed its system on the EU market and is within the regulation's scope. Physical presence in the EU is not required.

Putting an AI system into service in the EU

"Putting into service" means supplying an AI system directly to a deployer or user for first use in the EU. Where a non-EU company installs or configures an AI system for use by an EU-located business, it has put the system into service in the EU. This covers bespoke deployments, enterprise software integrations, and SaaS platforms configured for EU use.

Output of the AI system is used in the EU

This is the broadest trigger: even if an AI system is never placed on the EU market and is operated entirely outside the EU, if its output — predictions, decisions, content, recommendations — is used within the EU, the regulation applies to the provider and deployer. A non-EU AI system generating credit decisions, medical diagnoses, or hiring recommendations that affect EU-located individuals triggers the regulation at the output-use stage.

Deployers established or located in the EU (including EU arms of non-EU groups)

Where a company established outside the EU has a subsidiary, branch, or representative office in the EU and uses an AI system through or from that EU presence, the deployer obligations under the AI Act apply to the EU-established entity. Multi-national groups with EU subsidiaries that use AI systems developed or procured from outside the EU cannot use the non-EU parent structure to avoid deployer compliance obligations.
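As a rough screening aid, the four triggers described above can be expressed as a simple checklist. The sketch below is an assumption-laden simplification: the `Exposure` fields and the `article_2_triggers` function are hypothetical names, and each flag stands in for a legal question that needs proper analysis.

```python
from dataclasses import dataclass


@dataclass
class Exposure:
    """Hypothetical yes/no answers for one AI system and one organisation."""
    placed_on_eu_market: bool = False
    put_into_service_in_eu: bool = False
    output_used_in_eu: bool = False
    deployer_established_in_eu: bool = False


# Field name -> plain-language label for the trigger described in the text.
TRIGGER_LABELS = {
    "placed_on_eu_market": "placing on the EU market",
    "put_into_service_in_eu": "putting into service in the EU",
    "output_used_in_eu": "output used in the EU",
    "deployer_established_in_eu": "deployer established or located in the EU",
}


def article_2_triggers(exposure: Exposure) -> list[str]:
    """List every trigger that is met; one is enough to bring the Act into play."""
    return [label for field, label in TRIGGER_LABELS.items() if getattr(exposure, field)]


def in_scope(exposure: Exposure) -> bool:
    return bool(article_2_triggers(exposure))
```

A provider with no EU customers whose system's output is nonetheless used in the EU would be modelled as `Exposure(output_used_in_eu=True)`, for which `in_scope` returns `True` on the output-use trigger alone.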

Supply Chain Obligations — How Responsibility Flows

The AI Act distributes compliance obligations across the supply chain according to actor role. The following maps how obligations flow for a common scenario: a non-EU provider whose AI system is imported and distributed to EU deployers.

Section 3 — The Risk Classification System: Four Tiers and GPAI

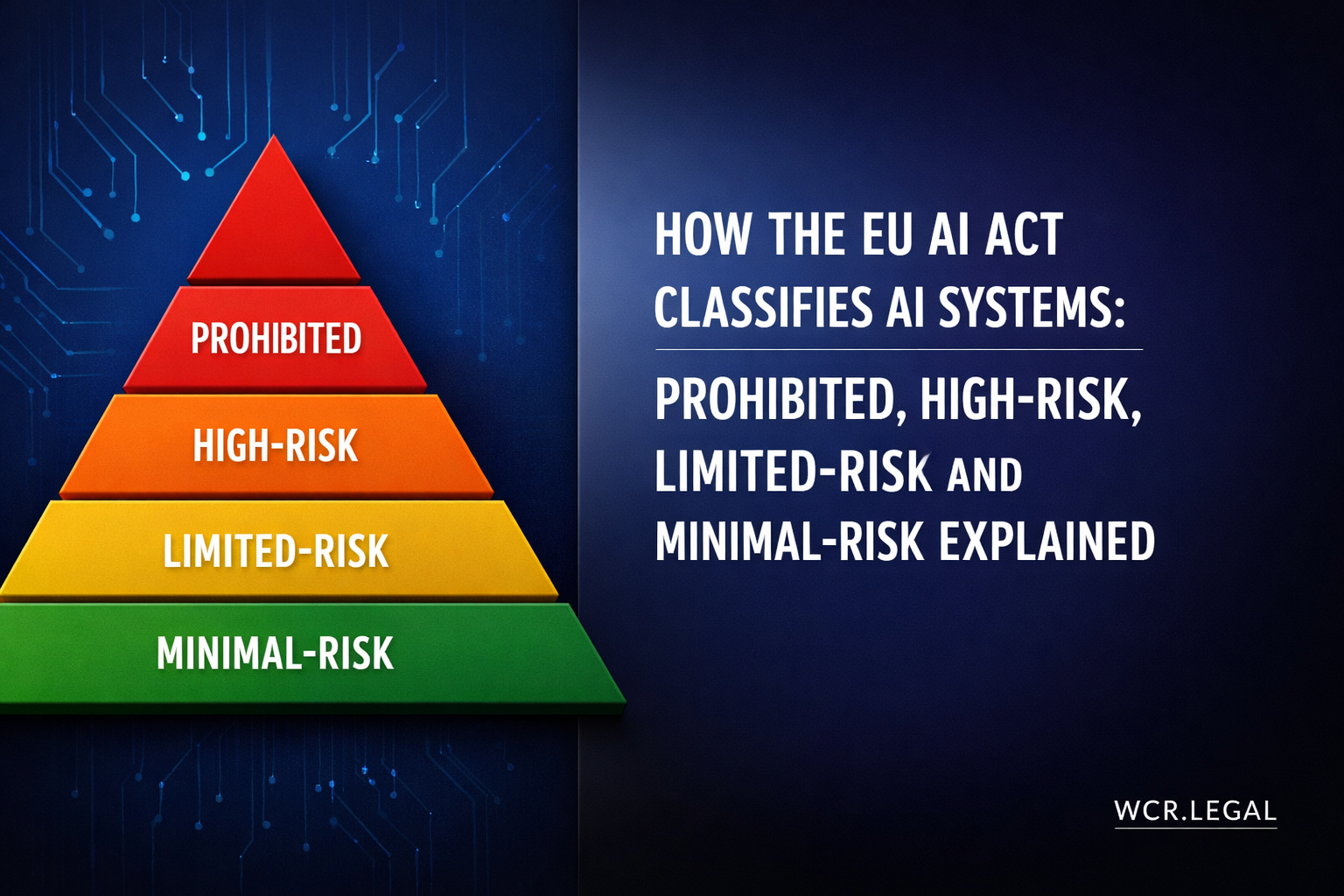

The AI Act is a risk-based regulation: obligations are calibrated to the potential harm an AI system can cause. The classification system has four tiers — prohibited practices, high-risk AI systems, limited-risk AI systems, and minimal-risk AI systems — plus a distinct track for general-purpose AI (GPAI) models that cuts across the risk hierarchy. Correctly classifying an AI system is the foundational step of any AI Act compliance programme: classification determines which obligations apply, when they apply, and what enforcement consequences attach to non-compliance.

Classification is determined by the intended purpose of the AI system — the use for which a system is specifically designed according to its provider's instructions for use, technical documentation, and marketing materials. A system used for a purpose other than its intended purpose may need to be re-assessed against the classification criteria applicable to its actual use. Deployers that repurpose AI systems beyond their intended use take on heightened compliance obligations.

The Four Risk Tiers

High-Risk AI — The Eight Annex III Categories

Any AI system whose primary intended purpose falls within one of these eight categories is classified as high-risk under the AI Act and subject to the full provider and deployer obligation framework from 2 August 2026.

Biometric identification and categorisation

Remote biometric identification systems; biometric categorisation systems attributing natural persons to specific categories

Critical infrastructure management

AI used as safety components in management and operation of critical digital infrastructure, road traffic, water, gas, heating, and electricity supply

Education and vocational training

AI determining access to educational institutions, assessing learners, evaluating learning outcomes, monitoring student behaviour

Employment and workers management

AI for recruitment, candidate selection, task allocation, performance monitoring, promotion and termination decisions, access to self-employment

Essential private and public services

AI determining access to healthcare, social benefits, creditworthiness assessment, insurance risk assessment, emergency services dispatch

Law enforcement

AI used to assess individual risk of criminal offending or reoffending, polygraphs, crime analytics, evidence reliability assessment, facial recognition by law enforcement

Migration, asylum, and border control

AI assessing risk of irregular migration, verifying authenticity of travel documents, processing asylum applications, screening at borders

Administration of justice and democratic processes

AI assisting courts in legal research or fact-finding; AI used in electoral campaigns; AI influencing elections or referendums

General-purpose AI models — large language models, multimodal foundation models, and other AI models capable of performing a wide range of tasks — are subject to a dedicated compliance framework under Chapter V of the AI Act (Articles 51–56), applicable from 2 August 2025. GPAI obligations apply at the model level, not the application level, and are directed at GPAI model providers — companies that develop and make GPAI models available to downstream providers and deployers.

All GPAI models

Technical documentation, transparency to downstream providers, a copyright policy for training data, and a summary of the training data used. Open-source GPAI models have reduced obligations: the documentation and downstream-information duties fall away, but the copyright policy and the training-data summary are still required, and the reduction does not extend to models with systemic risk.

GPAI models with systemic risk

All base obligations plus: model evaluation against state-of-the-art benchmarks, adversarial testing, reporting of incidents and serious malfunctions to the European AI Office, cybersecurity measures, and energy efficiency reporting.

How to classify your AI system — a practical starting sequence
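One way to sketch a starting sequence, assuming the tier order described in this section (prohibited practices first, then the Annex III high-risk categories, then limited risk, then minimal risk). Everything named here is hypothetical: the area labels are shorthand for the eight Annex III categories, and the `has_transparency_duty` flag is an assumption standing in for whatever makes a system "limited-risk". The sketch does not cover the Annex I product route or the separate GPAI track.

```python
from enum import Enum


class RiskTier(Enum):
    PROHIBITED = "prohibited practice"
    HIGH = "high-risk"
    LIMITED = "limited-risk"
    MINIMAL = "minimal-risk"


# Shorthand labels for the eight Annex III categories listed above.
ANNEX_III_AREAS = {
    "biometrics",
    "critical infrastructure",
    "education",
    "employment",
    "essential services",
    "law enforcement",
    "migration and border control",
    "justice and democratic processes",
}


def classify(
    intended_purpose_area: str,
    *,
    matches_article_5: bool = False,
    has_transparency_duty: bool = False,
) -> RiskTier:
    """Walk the tiers from most to least restrictive and stop at the first match."""
    if matches_article_5:
        return RiskTier.PROHIBITED
    if intended_purpose_area in ANNEX_III_AREAS:
        return RiskTier.HIGH
    if has_transparency_duty:
        return RiskTier.LIMITED
    return RiskTier.MINIMAL
```

Checking the prohibition first matters: a recruitment tool would normally return `RiskTier.HIGH` via `classify("employment")`, but the same tool returns `RiskTier.PROHIBITED` if it also matches an Article 5 practice.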

Section 4 — Prohibited AI Practices: What the AI Act Bans Outright

Article 5 of the AI Act prohibits eight categories of AI practices that the EU legislature determined present risks so severe that no conformity process, authorisation, or justification can make them acceptable. These prohibitions apply to providers, deployers, importers, and distributors alike, without regard to the commercial purpose or technical sophistication of the system. Critically, the prohibitions became applicable on 2 February 2025, ahead of the main high-risk obligations framework.

Subliminal, manipulative, or deceptive techniques

AI systems that deploy techniques operating below the threshold of conscious perception — subliminal techniques — or that exploit psychological weaknesses or biases to distort behaviour in a way that causes or is likely to cause significant harm. Covers systems using dark patterns, personalised persuasion at scale, or manipulative recommendation mechanics designed to bypass rational decision-making.

Exploitation of vulnerabilities of specific groups

AI systems that exploit vulnerabilities of specific groups — based on age (children, elderly), disability, or socio-economic situation — in a way that distorts their behaviour significantly and is likely to cause them harm. An AI system targeting financially distressed individuals with manipulative lending offers, or targeting children with addictive content mechanics, falls within this prohibition.

Social scoring

AI systems used to evaluate or classify natural persons or groups based on their social behaviour or personal characteristics, where the resulting score leads to detrimental or unfavourable treatment of those persons in unrelated social contexts, or to treatment that is unjustified or disproportionate to the behaviour. As adopted, the prohibition is not limited to public authorities: private operators are covered as well. It targets generalised social credit systems modelled on the Chinese system, not sector-specific assessments like creditworthiness within financial services.

Individual criminal risk assessment

AI systems making risk assessments of natural persons to predict future criminal or reoffending behaviour based solely on profiling or assessment of personality traits and characteristics. The prohibition targets predictive policing tools that generate individual-level risk scores without grounding in objective, verifiable individual facts and circumstances directly related to the individual's past conduct.

Biometric categorisation inferring sensitive attributes

AI systems that categorise individuals based on biometric data to infer or deduce their race, ethnic origin, political opinions, trade union membership, religious or philosophical beliefs, sexual orientation, or health data. This prohibition targets systems that use facial analysis or voice analysis to infer these protected characteristics — a common feature of surveillance and profiling technologies.

Untargeted facial image scraping for recognition databases

AI systems that create or expand facial recognition databases through untargeted scraping of facial images from the internet or CCTV footage. The prohibition targets the practice of building large-scale face recognition databases by collecting images without individual knowledge or consent — a practice used by several surveillance technology companies to build commercial facial recognition services.

Emotion recognition in workplace or educational settings

AI systems used to infer emotional states of natural persons in the context of the workplace or educational institutions, except where the system is intended for medical or safety reasons. Systems that analyse employee facial expressions, voice stress, or physiological signals during work interactions to assess their emotional state, whether for performance review, monitoring, or productivity management, are prohibited. AI-enabled wellbeing monitoring tools marketed to employers are within the scope of this prohibition if they infer emotion and fall outside the medical or safety exception.

Real-time remote biometric identification in public spaces (law enforcement)

The use of real-time remote biometric identification systems in publicly accessible spaces by law enforcement is prohibited — with three narrow exceptions requiring prior judicial or independent administrative authorisation: (1) targeted search for missing persons or victims of trafficking or sexual exploitation; (2) prevention of a specific and present terrorist threat; (3) identification of persons suspected of the most serious criminal offences carrying imprisonment of at least 4 years.

Section 5 — High-Risk AI: Obligations for Providers and Deployers

For AI systems classified as high-risk under Annex I (products and safety components covered by EU product legislation) or Annex III of the AI Act, both providers and deployers face significant mandatory obligations. Provider obligations are the most extensive in the Act: they span the full system lifecycle from design to post-market monitoring. Deployer obligations, while narrower in scope, are substantial and independently enforced. Both sets of obligations apply from 2 August 2026 for Annex III systems and from 2 August 2027 for Annex I product-embedded systems.

Provider Obligations — Articles 9–17 and 43–72

Providers of high-risk AI systems must satisfy a comprehensive set of pre-market and ongoing obligations. These obligations cannot be contracted out to deployers — the provider remains directly liable for compliance with the technical and procedural requirements regardless of how the system is distributed or deployed.

Risk management system (Article 9)

A continuous, iterative risk management process throughout the system's lifecycle. Providers must identify and analyse known and reasonably foreseeable risks, estimate and evaluate risks arising from intended use and foreseeable misuse, and adopt risk mitigation measures. Residual risk must be judged acceptable before market placement.

Data and data governance (Article 10)

Training, validation, and testing data must meet quality criteria: datasets must be relevant, sufficiently representative, and free of errors to the extent possible. Providers must address known biases and ensure data is appropriate for the system's intended purpose. Data governance practices must be documented.

Technical documentation (Article 11)

Providers must draw up and maintain comprehensive technical documentation (Annex IV format) before placing the system on the market. Documentation covers: system description and intended purpose, development methodology, training data, testing and validation results, risk management output, and monitoring procedures. It must be kept current throughout the system's lifetime.

Record-keeping and logging (Article 12)

High-risk AI systems must have automatic logging capability to record events relevant to system operation, including system activation periods, reference databases consulted, input data, and decisions. Logs must be retained for periods aligned with intended use (minimum 6 months for most systems, 3 years for law enforcement and migration systems).

Transparency and information to deployers (Article 13)

Systems must be designed and developed to ensure adequate transparency so deployers can interpret outputs and use them appropriately. Providers must supply instructions for use covering: system identity and purpose, accuracy and performance metrics, human oversight requirements, technical measures available, and circumstances requiring operator intervention.

Human oversight (Article 14)

Systems must be designed and built to enable effective human oversight. This includes technical measures allowing natural persons to monitor, understand, and intervene in system operation, including the ability to override, interrupt, or disregard system output. The level of oversight must be commensurate with the risk and context of use.

Accuracy, robustness, and cybersecurity (Article 15)

High-risk AI systems must achieve appropriate levels of accuracy throughout their lifecycle and be resilient against errors, faults, and inconsistencies. They must be sufficiently cyber-secure to prevent adversarial attacks that could alter behaviour, output, or performance. Providers must specify accuracy metrics in technical documentation.

Quality management system (Article 17)

Providers must put in place a documented quality management system covering: compliance strategy; techniques for system design and development; testing and validation procedures; technical standards applied; data management procedures; risk management processes; post-market monitoring; incident reporting; and staff training. The QMS must be proportionate to the size of the provider organisation.

Deployer Obligations — Articles 26 and 27

Deployers of high-risk AI systems — businesses and public bodies using a high-risk system under their authority — carry a distinct and independently enforced set of obligations. These obligations are not discharged by provider compliance; the deployer is directly responsible for how the system is deployed and used.

Section 6 — Compliance Timeline, Penalties, and Your Action Plan

The EU AI Act does not apply all at once. It follows a graduated implementation schedule tied to four specific application dates, and the most severe restrictions, the prohibited practices, have applied since 2 February 2025. Non-EU providers with EU market exposure cannot treat August 2026 as a single deadline: the compliance preparation window for high-risk AI systems involves technical, legal, and organisational work that typically requires 12–18 months to complete properly.

The Four Key Application Dates

1 August 2024: Entry into Force

Regulation (EU) 2024/1689 entered into force on 1 August 2024. The Act is binding as a matter of EU law from this date. General provisions, definitions, and institutional framework apply — including the establishment of the European AI Office and the framework for national competent authorities. Member States must begin designating national supervisory authorities from this date.

2 February 2025: Prohibited Practices Apply + GPAI Codes of Practice

All eight Article 5 prohibitions apply from 2 February 2025, so any AI system or feature falling within the prohibited categories must have been discontinued by then. The fines for prohibited practices (up to €35 million or 7% of worldwide annual turnover) become enforceable once the Act's penalty provisions start to apply on 2 August 2025. The development of codes of practice for general-purpose AI models (Article 56) also proceeds in this period, with the European AI Office coordinating industry and civil society participation.

2 August 2025: GPAI Model Obligations Apply

Chapter V of the AI Act — covering general-purpose AI models — applies from this date. Providers of GPAI models placed on the EU market must comply with transparency obligations, draw up and maintain technical documentation (Annex XI/XII format), establish copyright compliance policies, and publish summaries of training data. Providers of systemic-risk GPAI models face additional obligations including adversarial testing, incident reporting, and cybersecurity measures. The codes of practice finalized before this date create the compliance framework.

2 August 2026: Full Application (High-Risk AI, Conformity, Registration)

The main high-risk AI obligations framework applies in full. All providers of Annex III high-risk AI systems must have: completed conformity assessments; drawn up technical documentation and EU Declarations of Conformity; affixed CE marking; registered systems in the EU AI database; and established quality management and post-market monitoring systems. Deployers must have implemented human oversight measures, completed Fundamental Rights Impact Assessments where required, and established log retention procedures. National market surveillance authorities begin enforcement from this date. Non-compliance after 2 August 2026 exposes providers and deployers to the full penalty framework.
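The staged schedule lends itself to a small date lookup. The following sketch encodes only the four application dates described in this section; the labels, the `MILESTONES` list, and the helper names are illustrative assumptions rather than anything prescribed by the Act.

```python
from datetime import date
from typing import Optional

# The four application dates described above, in chronological order.
MILESTONES = [
    (date(2024, 8, 1), "entry into force"),
    (date(2025, 2, 2), "Article 5 prohibitions apply"),
    (date(2025, 8, 2), "GPAI model obligations apply"),
    (date(2026, 8, 2), "high-risk obligations apply"),
]


def milestones_reached(on: date) -> list[str]:
    """Return the labels of every milestone that has already taken effect."""
    return [label for start, label in MILESTONES if on >= start]


def days_until_next(on: date) -> Optional[int]:
    """Days remaining until the next milestone, or None once all have passed."""
    remaining = [(start - on).days for start, _ in MILESTONES if start > on]
    return min(remaining) if remaining else None
```

A compliance tracker could call `milestones_reached(date.today())` to see which obligations are already live and `days_until_next(date.today())` to size the remaining preparation window.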

Penalties — The Three-Tier Fine Structure

The AI Act uses a tiered penalty structure linked to the severity of the violation. Fines are imposed by the national competent authority of the Member State where the infringement occurred. For non-EU providers, the authority of the Member State where the EU authorized representative is based has jurisdiction. Fines are capped at the higher of the absolute amount or the percentage of worldwide annual turnover — meaning global revenue, not only EU revenue, is used to calculate the cap.
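The "higher of" rule is easy to misread, so a short worked calculation may help. In this sketch only the top tier (€35 million or 7%) is taken from this guide; the figures for the other two tiers reflect our reading of Article 99 and should be checked against the Regulation before being relied on. The tier names and the `maximum_fine` function are hypothetical, and the separate rule for SMEs (where the lower of the two amounts applies) is not modelled.

```python
from decimal import Decimal

# tier -> (fixed cap in EUR, share of worldwide annual turnover)
FINE_TIERS = {
    "prohibited_practice": (Decimal("35000000"), Decimal("0.07")),   # stated in this guide
    "other_obligation": (Decimal("15000000"), Decimal("0.03")),      # assumption: Article 99(4)
    "incorrect_information": (Decimal("7500000"), Decimal("0.01")),  # assumption: Article 99(5)
}


def maximum_fine(tier: str, worldwide_turnover_eur: Decimal) -> Decimal:
    """Cap is the higher of the fixed amount and the turnover percentage."""
    fixed_cap, turnover_share = FINE_TIERS[tier]
    return max(fixed_cap, turnover_share * worldwide_turnover_eur)
```

For a group with €1 billion in worldwide turnover, 7% is €70 million, which exceeds the €35 million figure, so the cap for a prohibited practice is €70 million. For a group with €100 million in turnover, 7% is only €7 million, so the €35 million figure governs.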

Your 6-Step AI Act Action Plan

For non-EU providers and deployers with EU market exposure, the following roadmap provides a structured approach to achieving compliance before 2 August 2026. Given the technical and organisational investment required, organisations that have not yet started compliance work should treat this as urgent.

Need legal guidance on your EU AI Act compliance strategy?

Whether you are a non-EU provider mapping your systems for the first time, a GPAI model developer preparing for the August 2025 obligations, or an enterprise deployer building your human oversight and FRIA framework, our AI law practice advises on every stage of the EU AI Act compliance lifecycle — from risk classification and technical documentation to authorized representative appointments and national authority interactions.