How the AI Services Market Is Changing in the Age of Free Models

Powerful AI models are now available at zero cost. DeepSeek, LLaMA, Mistral, and Gemma have made capabilities that cost millions to build freely downloadable. The AI services market is restructuring fast — and the businesses that understand where value is migrating, and where legal risk remains, will be the ones that come out ahead.

1. The Death of the AI Price Floor: What "Free" Actually Means (Market Economics)
2. How the Market Is Restructuring: Winners, Losers, and New Entrants (Competitive Landscape)
3. Where Value Migrates: The New Sources of Competitive Advantage (Strategic Value)
4. Legal Complexity Doesn't Come with a Discount: Regulatory Dimensions of Free AI (Legal & Regulatory)
5. Procuring AI Services in a Commoditised Market: What Businesses Need to Know (Procurement)
6. Strategic Adaptation: Positioning Your Business for the New AI Market (Strategy)

The Death of the AI Price Floor — What "Free" Actually Means

The AI services market was built on the assumption that access to frontier model capability required frontier-level spend. That assumption is now obsolete. A wave of open-weight and permissively licensed models has erased the price floor for base AI capability — but understanding what "free" actually costs, and what it changes in the market structure, is essential before drawing strategic conclusions.

- 2023: Meta releases LLaMA 2 under a commercial licence (game-changer). The first large-scale, commercially usable open-weight model from a major lab, in 7B to 70B parameter variants. It gave any developer GPT-3.5-level capability at zero model cost. Within weeks, thousands of fine-tuned derivatives appeared.

- 2023: Mistral AI releases Mistral 7B and Mixtral 8x7B under the Apache 2.0 licence. No use restrictions whatsoever on the base model. Mixtral achieved GPT-3.5 parity at a fraction of the compute cost, becoming the most deployed open model in enterprise applications within six months of release.

- 2024: Google releases Gemma 2 (2B, 9B, and 27B) and Meta releases LLaMA 3 and 3.1 (8B to 405B). Both families hit GPT-4-class performance at larger sizes. LLaMA 3.1 405B, trained on over 15 trillion tokens at an estimated cost exceeding $100 million, was made freely available. The gap between "open" and "frontier" closed substantially.

- 2024–2025: DeepSeek-V3 (December 2024) and DeepSeek-R1 (January 2025) are released, triggering a market inflection point (inflection). DeepSeek's models, built for a fraction of conventional training cost, matched GPT-4o on benchmarks and were released as fully open-weight. The R1 reasoning model demonstrated that frontier reasoning capability no longer required frontier training budgets. Global stock markets reacted; AI service pricing across the industry fell within weeks.

- 2025: API pricing for proprietary models collapses and a race-to-zero dynamic begins. Anthropic, OpenAI, and Google all cut API prices by 60–80% in response to open-model competition. By mid-2025, GPT-4-class capability was available for under $1 per million tokens via API, and at zero licence cost via locally deployed equivalents. The base-model price floor effectively ceased to exist.

How the Market Is Restructuring — Winners, Losers, and New Entrants

Commoditisation of the base-model layer does not affect all players in the AI market equally. It is profoundly disruptive for some business models and powerfully enabling for others. Understanding which side of the disruption your business sits on — and why — is the starting point for strategic adaptation.

Where Value Migrates — The New Sources of Competitive Advantage

When a capability becomes free, value does not disappear — it moves. Understanding exactly where value is migrating in the AI services stack determines which investments generate durable competitive advantage and which will be competed away as quickly as they are built. Five distinct value pools are now clearly visible as the base-model layer commoditises.

Legal Complexity Doesn't Come with a Discount — Regulatory Dimensions of Free AI

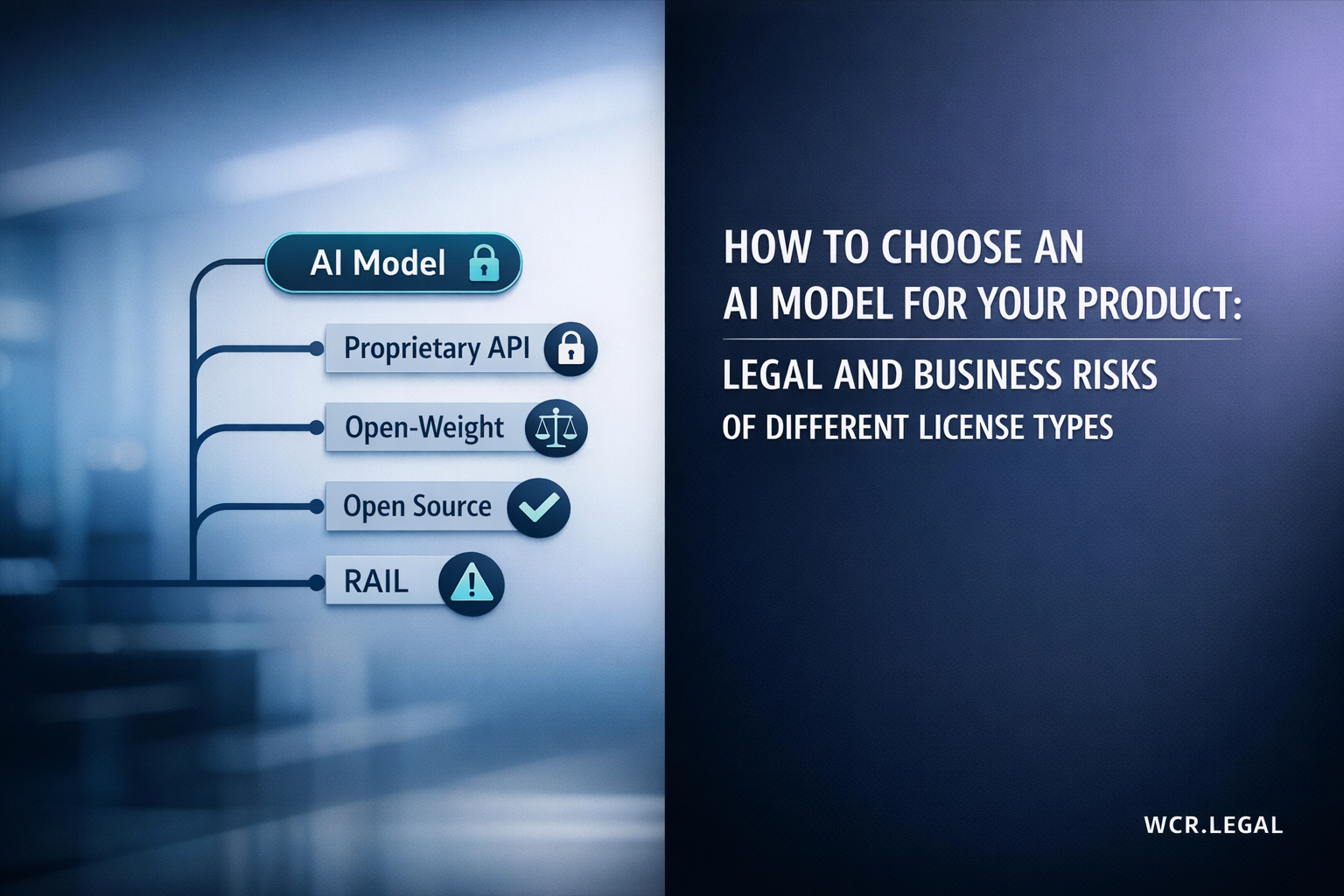

The widespread assumption that free or open-weight AI is legally simpler than proprietary AI is wrong. In many respects, the opposite is true. When you deploy an open-weight model, you assume direct compliance responsibility that would otherwise sit with the API provider. The legal and regulatory obligations that apply to AI services — licence compliance, export control, EU AI Act deployer duties, data protection, and AI liability — are unchanged by the price tag on the model. Here is what businesses need to understand.

| Obligation Area | What It Requires for Open-Weight Deployers | Key Point |
|---|---|---|
| Provider vs. Deployer Status | If you deploy an open-weight model "under your own name or trademark" or make material modifications, you may be reclassified as a provider under the EU AI Act, with much more extensive obligations than a deployer. This affects any business that fine-tunes, modifies, or brands an open-weight model as its own AI product. | High Impact |
| High-Risk System Classification | The EU AI Act's risk classification applies to the use case, not the model source. A free open-weight model deployed in an employment screening, credit scoring, or healthcare diagnostic application is a high-risk AI system with full compliance obligations, regardless of whether the model cost $0 or $1 million to license. | Applies Equally |
| GPAI Provider Obligations | The GPAI obligations under Articles 51–55 apply to the GPAI model provider (typically Meta, Google, or Mistral for their models), but deployers who build on GPAI models must ensure they receive adequate information about model limitations and known failure modes to discharge their own deployer obligations. Absent this, the deployer assumes greater liability for harmful outputs. | Documentation Key |
| Transparency to Users | Deployers must inform users that they are interacting with AI, and for many high-risk systems must provide information about system capabilities and limitations. This applies regardless of whether the underlying model is open-weight or proprietary. The transparency obligation cannot be outsourced to the model provider; the deployer owns it. | Direct Obligation |
| Fines for Non-Compliance | Deployers of high-risk AI systems that fail to meet conformity obligations face fines of up to €15 million or 3% of global annual turnover, whichever is higher. Deployers of prohibited AI applications face fines of up to €35 million or 7% of global annual turnover, whichever is higher. These fines apply equally to deployments built on free models and paid models. | Up to 7% Turnover |
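
To make the fine ceilings in the last row concrete, the following minimal sketch computes the maximum exposure for a given turnover. The function name `max_fine_eur` and the tier constants are hypothetical names chosen for illustration. The sketch assumes the "whichever is higher" rule for ordinary undertakings and, as a further assumption about the Act's SME provision, the "whichever is lower" rule when `sme=True`. It illustrates the arithmetic only and does not predict what a regulator would actually impose.

```python
from decimal import Decimal

# Ceilings as quoted in the table: (fixed cap in EUR, share of global annual turnover).
# The constant names are illustrative, not terms from the regulation.
PROHIBITED_PRACTICE = (Decimal("35000000"), Decimal("0.07"))
HIGH_RISK_OBLIGATION = (Decimal("15000000"), Decimal("0.03"))


def max_fine_eur(global_turnover_eur, tier, sme=False):
    """Return the upper bound of the fine for one infringement tier.

    Assumption: ordinary undertakings face the higher of the two caps,
    while SMEs face the lower of the two.
    """
    fixed_cap, turnover_share = tier
    turnover_cap = Decimal(global_turnover_eur) * turnover_share
    if sme:
        return min(fixed_cap, turnover_cap)
    return max(fixed_cap, turnover_cap)


# A group with EUR 2 billion turnover: 3% (EUR 60 million) exceeds the EUR 15 million cap.
large_group = max_fine_eur(2_000_000_000, HIGH_RISK_OBLIGATION)

# A company with EUR 100 million turnover: 7% is EUR 7 million, so the EUR 35 million cap governs.
mid_size = max_fine_eur(100_000_000, PROHIBITED_PRACTICE)
```

The turnover-based cap dominates for large groups, which is why the model's price tag is irrelevant to exposure: the ceiling scales with the deployer's revenue, not with what was paid for the model.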

Procuring AI Services in a Commoditised Market — What Businesses Need to Know

The commoditisation of base AI capability has transformed the procurement decision for businesses buying AI services. The question is no longer simply "which model is best?" — it is a more complex build-versus-buy-versus-integrate analysis, layered with due diligence requirements, vendor-lock-in considerations, and contractual protections that are specific to the new market structure. Here is how to approach AI services procurement intelligently in 2025.
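
One input to the build-versus-buy analysis is a simple break-even calculation: at what monthly token volume does self-hosting an open-weight model become cheaper than paying per token? The sketch below is illustrative only. The function name and all cost figures other than the roughly $1 per million tokens API price mentioned earlier are hypothetical, and it ignores compliance, staffing, and integration costs, which often dominate in practice.

```python
from typing import Optional


def break_even_million_tokens(
    fixed_monthly_cost: float,
    api_price_per_million: float,
    self_host_cost_per_million: float = 0.0,
) -> Optional[float]:
    """Monthly volume (in millions of tokens) above which self-hosting is cheaper.

    fixed_monthly_cost: infrastructure and operations cost of self-hosting.
    api_price_per_million: what the API vendor charges per million tokens.
    self_host_cost_per_million: marginal compute cost when self-hosting.
    Returns None when self-hosting never breaks even.
    """
    saving_per_million = api_price_per_million - self_host_cost_per_million
    if saving_per_million <= 0:
        return None
    return fixed_monthly_cost / saving_per_million


# Hypothetical inputs: $3,000 per month fixed cost, $1.00 API price,
# $0.25 marginal self-hosting cost per million tokens.
volume = break_even_million_tokens(3000.0, 1.0, 0.25)  # 4000.0 million tokens per month
```

Under these assumed figures, a business processing fewer than about 4 billion tokens a month would pay less by buying API access, which is one reason "free" models do not automatically win the procurement decision.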

Strategic Adaptation — Positioning Your Business for the New AI Market

The AI services market is not going back. The commoditisation of base-model capability is permanent, the market restructuring is ongoing, and the regulatory obligations are increasing in parallel. The businesses that thrive in this environment are not those with the best model — they are those that respond to the new market reality most intelligently. Here are five strategic moves and the sector-specific context that makes them actionable.

| Sector | Primary Opportunity | Primary Risk | Strategic Priority |
|---|---|---|---|
| ⚖️ Legal Services | Fine-tune on case law & precedents: AI-assisted drafting, research, and due diligence at a fraction of current tool cost | Hallucinated citations; malpractice exposure; bar association scrutiny of AI use | Verification protocols + AI governance framework + explicit client disclosure policy |
| 🏥 Healthcare | Clinical data fine-tuning: sovereign infrastructure for patient data processing; diagnostic support with on-premise models | High-risk EU AI Act classification; liability for AI diagnostic errors | DPIA + conformity assessment + human oversight architecture before deployment |
| 💰 Financial Services | Transaction data advantage: risk model fine-tuning; private deployment for data-sensitive trading & compliance workflows | FCA/SEC AI governance requirements; EU AI Act classification of credit AI | Explainability infrastructure + regulator engagement + model documentation |
| 🏛️ Public Sector | Data sovereignty now achievable: EU public bodies can deploy open models on sovereign infrastructure for the first time | EU AI Act high-risk status for public services; procurement rule compliance | Sovereign deployment architecture + cross-border data compliance + conformity assessment |
| 🛒 E-Commerce & Retail | Purchase data moat: personalisation and recommendation at near-zero model cost; customer service automation | Consumer protection AI rules; GDPR profiling restrictions | Consent architecture + GDPR Article 22 compliance for automated decisions + user transparency |
| 🏗️ Manufacturing | Operational data advantage: quality control, predictive maintenance, and supply chain optimisation with proprietary sensor data | Safety-critical AI classification; product liability for AI-influenced decisions | Safety validation + EU AI Act safety component analysis + product liability review |

- 🤖 The base AI model layer is commoditised. Frontier-class AI capability is now available for free or near-free. This is permanent, not a temporary pricing anomaly, so plan your AI strategy accordingly.
- 📈 Value has not disappeared. It has migrated up the stack to proprietary data, domain expertise, compliance infrastructure, and workflow integration. These are where durable competitive advantage now lives.
- ⚖️ Free models are not legally simpler. Open-weight deployment often increases your regulatory burden by removing the shared compliance relationship with a provider. Legal complexity scales with deployment, not with model cost.
- 🌍 Cross-border AI complexity is increasing as jurisdictions diverge on AI rules. Export controls, data residency requirements, and jurisdiction-specific regulatory obligations all apply equally to free and paid AI deployments.
- 🏆 The businesses that win in the age of free models are not those with the cheapest AI. They are those that combine accessible AI capability with the data assets, domain expertise, governance frameworks, and legal structuring that competitors cannot easily replicate.

Navigate the New AI Market with Legal and Regulatory Confidence

Whether you are deploying open-weight models for the first time, restructuring your AI vendor relationships, managing cross-border AI compliance, or building governance frameworks for regulated-sector AI, our team provides the legal expertise that the new AI market demands.