How to Choose an AI Model for Your Product: Legal and Business Risks of Different Licence Types

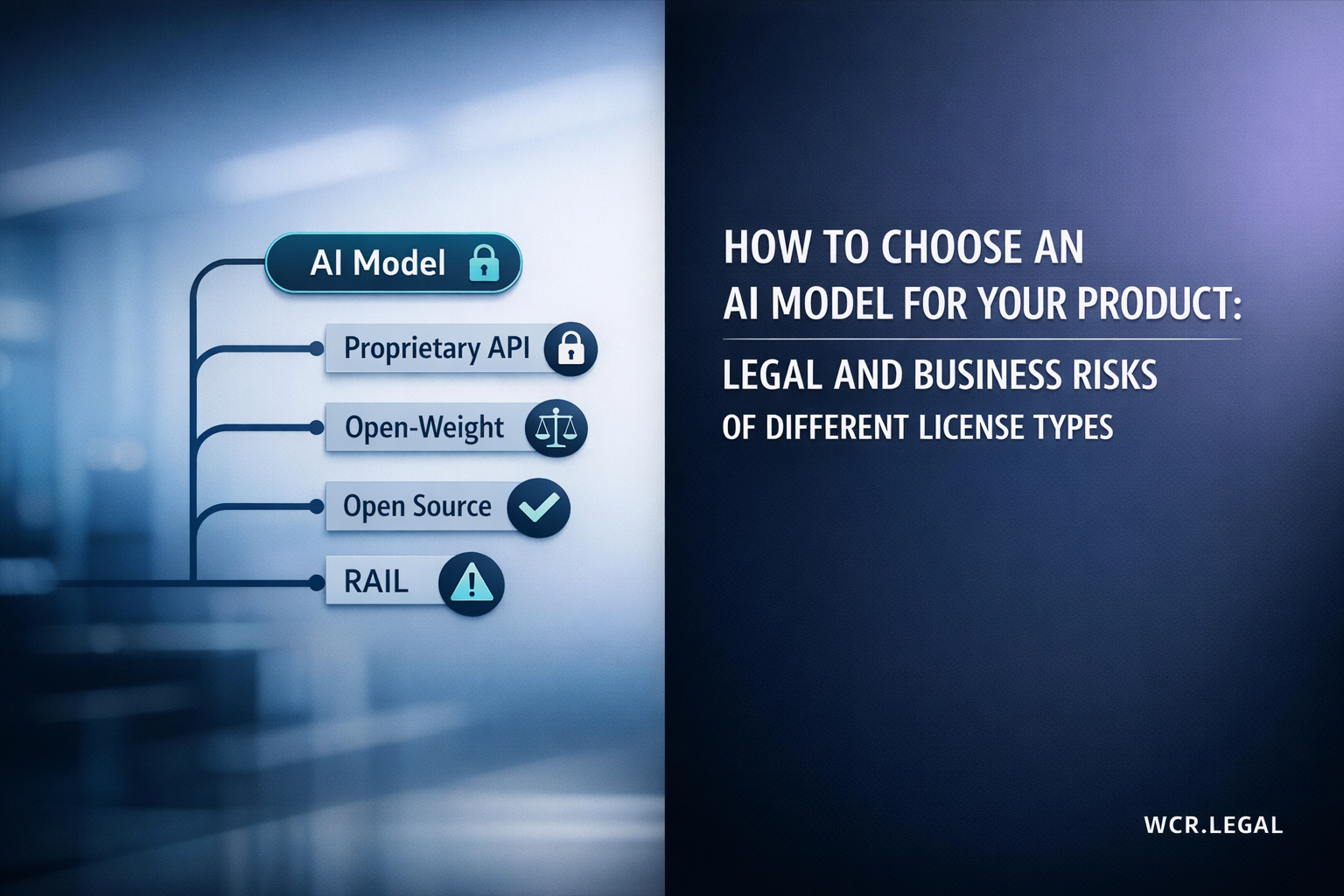

Before you integrate an AI model into your product, you are making a legal decision — not just a technical one. The licence type determines what you can build, who owns the outputs, and who is liable when things go wrong.

Why Model Licence Type Is a Legal Decision

Most product teams evaluate AI models on performance benchmarks, cost per token, and latency. The licence is treated as a legal formality — something to scan quickly before moving on. That is the wrong approach, and it creates real exposure.

When you integrate an AI model into your product — whether through an API, a self-hosted deployment, or a fine-tuned derivative — you are entering a legal relationship that governs your product's IP, your liability exposure, and your regulatory compliance obligations. The licence is the contract. Understanding it before you build is not optional.

The regulatory dimension is becoming increasingly concrete. Under the EU AI Act's risk-based framework, products built on high-risk AI systems — regardless of whether the underlying model is proprietary or open-weight — carry specific obligations around transparency, human oversight, and technical documentation. The licence type you choose directly affects how you can satisfy those obligations. See the guide to AI risk and liability frameworks for the interaction between model licensing and product liability under the Act.

Cross-border products face an additional layer. A model licensed under US terms, deployed in an EU product, accessed by users in multiple jurisdictions creates a three-way regulatory intersection that is easy to underestimate at the build stage. The cross-border AI compliance guide covers the interaction between licence jurisdiction and applicable law in more detail.

Open-Weight Models with Usage Restrictions

The term "open" applied to AI models covers a wide spectrum. Understanding exactly what is open — and what is not — is essential before you build a product on an open-weight model.

An open-weight model is one where the trained model weights — the numerical parameters that encode the model's capabilities — are made available for download and local deployment. This is distinct from true open-source, which requires the training code, training data, and model architecture to also be available under an open licence.

Open-weight availability gives you something significant: you can deploy the model on your own infrastructure, customise it through fine-tuning, and avoid per-token API costs. You also have more control over data residency, latency, and regulatory compliance — all of which matter for GDPR and EU AI Act compliance documentation.

What open-weight does not give you is freedom from the licence. Llama 3, Gemma 2, Falcon 180B, and many other prominent open-weight models are released under custom licences, not Apache 2.0 or MIT. (Mistral 7B and Phi-3 are exceptions, released under Apache 2.0 and MIT respectively.) Those custom licences contain material restrictions on commercial use, redistribution, and permitted applications.

The most commercially significant restriction in current open-weight licences is the monthly active user (MAU) threshold. Meta's Llama 3 licence, for example, requires you to request a separate licence from Meta if the products or services offered by you and your affiliates had more than 700 million monthly active users in the calendar month before the Llama 3 release date. Below that threshold, commercial use is permitted, but the licence contains other meaningful restrictions.

Use-case prohibitions are the second major restriction category. Most open-weight licences prohibit use for specific application types — weapons development, surveillance systems, voter suppression, and similar high-risk categories. These prohibitions mirror the EU AI Act's prohibited use categories, but are not always coextensive with them. You need to check both the licence and the Act independently.

Attribution and branding requirements are a third area where teams are frequently unprepared. Some open-weight licences require attribution notices in your product's documentation or user-facing content. Failing to comply puts you in breach of the licence, which can lead to termination and IP infringement exposure.

| Model | Commercial use | Fine-tuning | MAU cap / other restriction |
|---|---|---|---|
| Llama 3 (Meta) | Conditional | Yes | 700M |
| Mistral 7B | Apache 2.0 | Yes | None |
| Gemma 2 (Google) | Conditional | Yes | Prohibited uses list |
| Phi-3 (Microsoft) | MIT | Yes | None |
| Falcon 180B (TII) | Custom licence | Conditional | Revenue threshold |
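
The comparison above can also be kept as data, so that a licence check runs whenever your user numbers or model choice change. The following is a minimal sketch, not a compliance tool: the `ModelLicence` class, the `exceeds_mau_cap` and `review_notes` helpers, and the field names are all hypothetical, and the values are simply transcribed from the table, so they should be re-checked against the current licence texts.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class ModelLicence:
    """One row of the comparison table (hypothetical structure)."""
    model: str
    commercial_use: str
    fine_tuning: str
    mau_cap: Optional[int] = None  # numeric MAU cap, if the table lists one
    other_limit: str = ""          # non-numeric limit noted in the table


# Values transcribed from the table; verify against the licence text itself.
LICENCES = [
    ModelLicence("Llama 3 (Meta)", "Conditional", "Yes", mau_cap=700_000_000),
    ModelLicence("Mistral 7B", "Apache 2.0", "Yes"),
    ModelLicence("Gemma 2 (Google)", "Conditional", "Yes",
                 other_limit="Prohibited uses list"),
    ModelLicence("Phi-3 (Microsoft)", "MIT", "Yes"),
    ModelLicence("Falcon 180B (TII)", "Custom licence", "Conditional",
                 other_limit="Revenue threshold"),
]


def exceeds_mau_cap(licence: ModelLicence, monthly_active_users: int) -> bool:
    """True when the user count is above the numeric cap recorded for the model."""
    return licence.mau_cap is not None and monthly_active_users > licence.mau_cap


def review_notes(licence: ModelLicence, monthly_active_users: int) -> List[str]:
    """Collect the points a legal reviewer should look at for this model."""
    notes = []
    if exceeds_mau_cap(licence, monthly_active_users):
        notes.append("MAU cap exceeded: separate commercial agreement required")
    if licence.commercial_use in ("Conditional", "Custom licence"):
        notes.append("Commercial use is conditional: read the licence terms")
    if licence.other_limit:
        notes.append(f"Other limit: {licence.other_limit}")
    return notes


if __name__ == "__main__":
    for lic in LICENCES:
        print(lic.model, review_notes(lic, monthly_active_users=2_000_000))
```

A check like this only flags items for review. It does not replace reading the licence, and the MAU comparison is a simplification of how any particular licence defines its threshold.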

Truly Open-Source Models: Apache 2.0, MIT, and BSD

A small but growing category of AI models are released under standard open-source licences — not custom model licences, not conditional commercial permissions. Genuine permissive open-source gives you the most freedom, but freedom is not the same as risk-free. The cross-border AI compliance framework still applies regardless of your licence type.

Apache 2.0 is the closest thing to an industry standard for permissive commercial open-source AI. It permits unrestricted commercial use, modification, redistribution, and sublicensing, subject to a few conditions: recipients must receive a copy of the licence, attribution and copyright notices must be preserved in distributed copies, and modified files must carry a notice stating that they were changed.

Apache 2.0 also includes an explicit patent licence grant from contributors, which is meaningfully stronger IP protection than MIT — particularly relevant for AI models where training procedures or architectures may be subject to patent claims.

- ✓ Commercial use: Unrestricted, including SaaS products and derivative models

- ✓ Fine-tuning and modification: Permitted; your own modifications can be released under different terms, while the original material stays under Apache 2.0

- ✓ Patent grant: Explicit patent licence from contributors, which is stronger protection than MIT

- ! Attribution required: Must preserve NOTICE file and copyright statements in distributed products
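
The attribution condition is easy to miss at release time, so some teams add a packaging check. The sketch below assumes a release directory that should contain files named `LICENSE` and `NOTICE`. Both the file names and the `missing_attribution_files` helper are assumptions for illustration; what you must actually ship depends on what the upstream model distribution includes.

```python
from pathlib import Path
from typing import Iterable, List

# Assumed file names; adjust to match the upstream distribution.
REQUIRED_FILES = ("LICENSE", "NOTICE")


def missing_attribution_files(
    release_dir: str, required: Iterable[str] = REQUIRED_FILES
) -> List[str]:
    """Return the required attribution files that are absent from release_dir."""
    root = Path(release_dir)
    return [name for name in required if not (root / name).is_file()]


if __name__ == "__main__":
    missing = missing_attribution_files("dist")
    if missing:
        raise SystemExit(f"Release is missing attribution files: {missing}")
    print("Attribution files present")
```

Running this as a step in a build pipeline turns a missing notice into a failed build rather than a post-release licence problem. It checks only that the files exist, not that their contents are correct.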

MIT is the most permissive mainstream open-source licence. It permits doing almost anything with the software — use, copy, modify, merge, publish, distribute, sublicense, and sell — subject to a single condition: the copyright notice and permission notice must be included in all copies or substantial portions of the software.

MIT has one notable weakness compared to Apache 2.0 for AI: it does not include an explicit patent licence grant. If a contributor holds patents covering training techniques or model architecture elements, MIT does not automatically grant you a licence to those patents. In practice this risk is low but non-zero, particularly for models with novel architectural features.

- ✓ Commercial use: Unrestricted, with no revenue caps and no MAU thresholds

- ✓ Derivative works: Can be relicensed under any terms, including proprietary

- ! No patent grant: Patent rights not explicitly licensed; check model origin for patent exposure

- ! No warranty: Model provided "as is", with no representation on output accuracy or fitness for purpose

A permissive open-source licence resolves the commercial use question. It does not resolve your product's broader legal obligations — and this is where many teams make a critical planning error.

The EU AI Act's requirements apply based on what your product does — not which licence your underlying model uses. If your product is a high-risk AI system under Article 6, you need a conformity assessment, technical documentation, and a quality management system whether your model is GPT-4 or Apache 2.0 open-source. The licence exempts nothing.

- ! Training data liability: Open-source licences do not warrant that training data was lawfully obtained. Copyright infringement claims from training data exposure remain a residual risk regardless of your product's downstream licence.

- ! Output liability: No open-source licence disclaims your product's liability for harmful, defamatory, or inaccurate model outputs. That exposure is yours entirely.

- ! EU AI Act compliance: Risk classification under the Act follows the use case. A truly open-source model used for employment screening is still a high-risk AI system requiring full compliance documentation.

RAIL and Responsible AI Licences: The New Compliance Layer

Responsible AI Licences (RAIL) represent a new category of licence that sits between traditional open-source and proprietary terms. They are proliferating rapidly — and they introduce a type of downstream use restriction that most legal teams are not yet equipped to evaluate.

Responsible AI Licences came to prominence when BigScience released the BLOOM model under one, and have since been adopted by a growing number of AI developers. They are open-weight licences (the weights are available), but they add a "use restrictions" addendum that explicitly prohibits specific application categories.

The key structural innovation is that RAIL restrictions are designed to propagate downstream. When you fine-tune a RAIL-licensed model and distribute the derivative, your distribution must carry the same use restrictions. This "viral" restriction mechanism is conceptually similar to copyleft in software licensing — but applied to permitted use categories rather than to source code availability.

RAIL licences typically include a use restrictions schedule derived from the Responsible AI Licences framework. The specific list varies by version and model, but common prohibited uses include:

- Law enforcement, criminal justice risk assessment, or recidivism prediction

- Autonomous lethal weapons systems or military surveillance

- Generating or distributing disinformation for political manipulation

- Targeted advertising based on protected characteristics (race, religion, sexual orientation)

- Tracking or surveillance of individuals without their informed consent

- Medical diagnosis or treatment without qualified human oversight

- Generating child sexual abuse material (CSAM) — absolute prohibition

The overlap with the EU AI Act's prohibited practices list (Article 5) is substantial — but not complete. RAIL restrictions may be narrower or broader than the Act in specific categories. You must check both independently for each intended use case.

RAIL licences are enforceable contracts. Breach of the use restrictions schedule constitutes a licence violation — which means your right to use, distribute, or build on the model is automatically terminated without notice in most RAIL variants. Termination does not require court action: the licence simply ceases to apply, and continued use becomes IP infringement.

The practical enforcement question is how this is monitored for downstream users. Currently, RAIL enforcement is primarily complaint-driven — a user or competitor reports a violation to the model developer, who can then pursue termination. As AI governance frameworks mature and as regulatory bodies develop monitoring capabilities, this may become more systematic.

- Licence automatically terminates on violation — no grace period in most RAIL variants

- Downstream obligations bind your customers if you distribute RAIL-licensed models or derivatives

- You may need to implement user-facing terms that carry RAIL restrictions through to your end users

Building a product on a RAIL-licensed model creates two distinct compliance obligations. First, you must ensure your own product does not fall within any of the prohibited use categories in the licence. This requires a careful mapping of your product's actual functionality — not just its intended use — against the restrictions schedule.

Second, if you distribute the model or a fine-tuned derivative to third parties — as part of an API, a white-label product, or an open release — you must pass the RAIL restrictions through to those parties. This means your ToS or licence agreement with downstream users must prohibit the same use categories. Failing to include this pass-through makes you liable for downstream violations as a distributor.

- Map product functionality against restrictions — not just marketing intent

- If distributing derivatives: include RAIL pass-through obligations in your downstream ToS

- Document the mapping as part of your EU AI Act technical file if applicable

- Review restrictions on every major licence version update — RAIL versions evolve
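
One way to make the functionality mapping repeatable is to have a reviewer tag each product feature with the use categories it touches, then intersect those tags with the restrictions schedule. The sketch below is illustrative only: the category identifiers paraphrase the common prohibited uses listed earlier, the `restricted_hits` helper and the feature names are hypothetical, and the real schedule must come from the specific licence version you are using.

```python
from typing import Dict, List, Set

# Hypothetical identifiers paraphrasing the common RAIL prohibited uses above.
RESTRICTED_CATEGORIES: Set[str] = {
    "law_enforcement_risk_assessment",
    "autonomous_weapons_or_military_surveillance",
    "political_disinformation",
    "advertising_on_protected_characteristics",
    "non_consensual_tracking",
    "medical_diagnosis_without_oversight",
    "csam",
}


def restricted_hits(feature_tags: Dict[str, Set[str]]) -> Dict[str, List[str]]:
    """Map each feature to the restricted categories a reviewer tagged it with.

    Features with no restricted tags are omitted from the result.
    """
    hits = {}
    for feature, tags in feature_tags.items():
        overlap = sorted(tags & RESTRICTED_CATEGORIES)
        if overlap:
            hits[feature] = overlap
    return hits


if __name__ == "__main__":
    # Tags describe what the feature actually does, not its marketing intent.
    product = {
        "resume_ranker": {"employment_screening"},
        "location_history": {"non_consensual_tracking"},
    }
    print(restricted_hits(product))
```

The tagging itself is the legal judgment and stays with a human reviewer. The code only keeps the record consistent and makes it easy to re-run when the licence version or the feature list changes.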

Decision Framework: Matching Licence to Your Product

The right licence type for your product follows from five questions about your business model, use case, market, and risk tolerance. Answer them honestly and the decision narrows quickly. See also cross-border AI compliance for the additional layer when your product operates across multiple jurisdictions.

| Licence type | Commercial use | Output ownership | Vendor lock-in | Use restrictions | IP indemnification |
|---|---|---|---|---|---|
| Proprietary API | ✓ Yes | Conditional | High | ToS dependent | Enterprise only |
| Open-weight (custom licence) | Conditional | ✓ Yes | None | Model-specific | None |
| Apache 2.0 open-source | ✓ Yes | ✓ Yes | None | None | Patent grant only |
| MIT open-source | ✓ Yes | ✓ Yes | None | None | None |
| RAIL / OpenRAIL | Conditional | ✓ Yes | None | Use list applies | None |

Question 1: Is your use case permitted? This is the threshold question. Before any other evaluation, confirm that your product's actual functionality, not just its intended purpose, is permitted under the licence you are considering. RAIL restrictions, custom open-weight licences, and proprietary ToS all contain prohibited use lists. Check each one independently against your product specification.

Question 2: Do you need to self-host? Data residency requirements, GDPR compliance, latency requirements, and cost structure may all require self-hosted deployment rather than API access. Self-hosting requires access to model weights, which rules out proprietary API models and leaves open-weight or open-source options.

Question 3: How stable do the licence terms need to be? Proprietary API licences can change unilaterally. Custom open-weight licences (like Llama) can be updated by the model developer. Only permissive open-source licences under stable frameworks (Apache 2.0, MIT) offer genuine licence stability: once a version is released under these terms, that version's licence cannot be retroactively changed.

Question 4: Will you distribute the model or a derivative? If your product includes a model as a component (for example, an embedded AI feature, a white-label API, or an open-source release), you are distributing a derivative. This triggers pass-through obligations under RAIL licences and attribution requirements under Apache 2.0 and MIT. Proprietary API models generally prohibit redistribution of the underlying model weights entirely.

Question 5: Is your product a high-risk AI system under the EU AI Act? If so, you need technical documentation that may be easier to produce for self-hosted models where you have direct access to model specifications, training documentation, and evaluation results. For proprietary API models, you are dependent on provider documentation, which varies significantly in completeness. See AI risk and liability frameworks for the full documentation requirements.
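
The narrowing described in this section can be sketched as a simple filter over the licence types in the comparison table. This is a rough illustration under stated assumptions: the `LicenceType` class, its three boolean fields, and the `shortlist` function are hypothetical simplifications of the table and the questions on self-hosting, stability, and use restrictions. It leaves out distribution and EU AI Act documentation, which need case-by-case review.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class LicenceType:
    name: str
    self_hostable: bool     # weights available to run on your own infrastructure
    stable_terms: bool      # a released version's terms cannot change retroactively
    use_restrictions: bool  # carries a prohibited-use list or ToS restrictions


# Simplified reading of the comparison table and the discussion in this section.
LICENCE_TYPES = [
    LicenceType("Proprietary API", False, False, True),
    LicenceType("Open-weight (custom licence)", True, False, True),
    LicenceType("Apache 2.0 open-source", True, True, False),
    LicenceType("MIT open-source", True, True, False),
    LicenceType("RAIL / OpenRAIL", True, False, True),
]


def shortlist(
    need_self_hosting: bool,
    need_stable_terms: bool,
    accept_use_restrictions: bool,
) -> List[str]:
    """Return the licence types that survive the three yes/no requirements."""
    result = []
    for lt in LICENCE_TYPES:
        if need_self_hosting and not lt.self_hostable:
            continue
        if need_stable_terms and not lt.stable_terms:
            continue
        if lt.use_restrictions and not accept_use_restrictions:
            continue
        result.append(lt.name)
    return result


if __name__ == "__main__":
    print(shortlist(need_self_hosting=True,
                    need_stable_terms=True,
                    accept_use_restrictions=False))
```

A shortlist produced this way is a starting point for legal review, not a conclusion. Accepting use restrictions in the filter still leaves the work of checking your product against each specific prohibited-use list.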

WCR Legal advises AI product teams on model licence risk, EU AI Act compliance, IP ownership structures, and cross-border regulatory obligations. We review your specific model selection and use case — not generic advice.