Jurisdiction, IP, and Liability: The Three Decisions That Shape AI Company Structuring


AI companies scaling beyond a single market face a structuring problem that is materially harder than the one typical SaaS companies face. This guide covers where to hold your model IP, how regulatory exposure follows your users, how to architect liability, and how to match structure to stage. It is written for founders making AI structuring and investment decisions in 2026.

Why AI companies have a harder structuring problem

The standard holding structure playbook (an IP holdco in a low-tax jurisdiction, with an operating company in the market) works reasonably well for SaaS. For AI companies, it breaks down at three points: what the IP actually is, where regulation reaches, and who carries liability when the model is a regulated product. Understanding where it breaks is the starting point for getting the structure right. See our AI governance & risk practice for context on the regulatory environment this sits within.

With standard SaaS, software IP can be assigned to a holding company in a tax-efficient jurisdiction relatively cleanly. The IP is code; ownership is documented by assignment agreement; transfer is administratively straightforward.

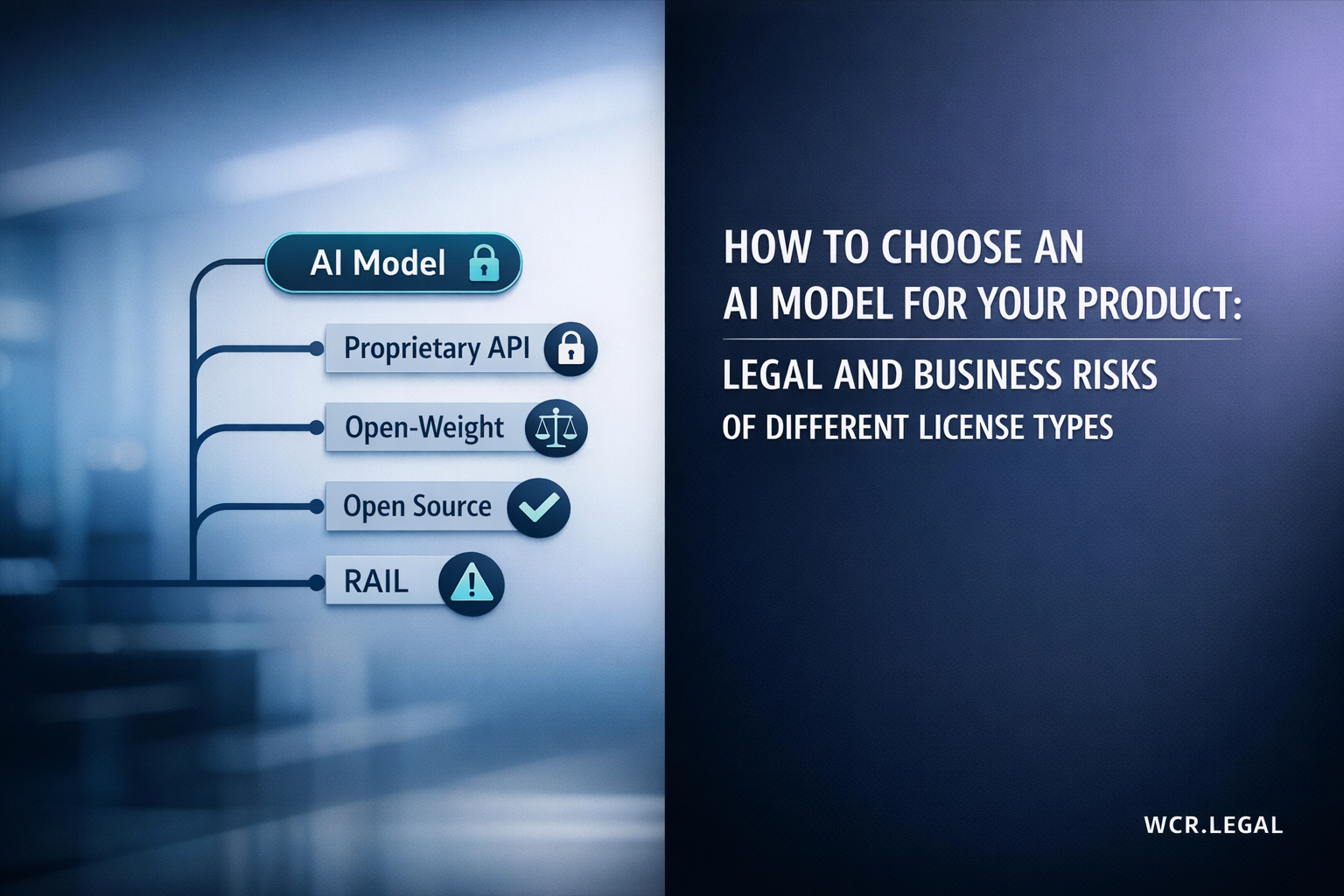

With AI, the “IP” includes training data (often multi-source, with licensing constraints on each source), model weights (which may be built on a licensed base model), fine-tuning datasets (proprietary or third-party), and prompts and inference logic — each with different ownership logic and different transferability constraints. Assigning “the AI IP” to a holdco requires first mapping what the IP actually is and whether each component is freely assignable. Many founders have not done this work.
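Mapping the IP before assigning it can start as a simple structured inventory. The Python sketch below is illustrative only. The `IPComponent` schema, the three `Assignability` categories and the sample entries are all hypothetical, and deciding how any real component is classified remains a legal question. What the sketch shows is the payoff of doing the mapping: the components that cannot simply be assigned to a holdco are identified immediately.

```python
from dataclasses import dataclass
from enum import Enum


class Assignability(Enum):
    # Hypothetical categories for illustration; real analysis is per contract.
    FREE = "freely assignable"
    RESTRICTED = "assignable only with consent or under licence terms"
    NOT_OWNED = "licensed in; cannot be assigned"


@dataclass(frozen=True)
class IPComponent:
    name: str
    kind: str          # e.g. "training data", "model weights", "fine-tuning data", "prompts"
    owner: str         # who holds title today
    assignability: Assignability
    notes: str = ""


def blockers_to_holdco_transfer(components: list[IPComponent]) -> list[IPComponent]:
    """Return the components that cannot simply be assigned to an IP holdco."""
    return [c for c in components if c.assignability is not Assignability.FREE]


# Hypothetical inventory for a company fine-tuning a licensed base model.
inventory = [
    IPComponent("Core weights", "model weights", "OpCo", Assignability.RESTRICTED,
                "fine-tuned from a licensed base model"),
    IPComponent("Support tickets corpus", "fine-tuning data", "OpCo", Assignability.FREE),
    IPComponent("Licensed news archive", "training data", "Third-party publisher",
                Assignability.NOT_OWNED),
    IPComponent("System prompts", "prompts", "OpCo", Assignability.FREE),
]

for c in blockers_to_holdco_transfer(inventory):
    print(f"{c.name}: {c.assignability.value}")
```

Run as written, the sketch prints two items: the core weights, restricted by the base-model licence, and the licensed news archive. Both need attention before any transfer to a holdco.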

An AI company serving EU users is subject to the EU AI Act regardless of where it is incorporated. A company using the personal data of individuals in the EU for model training is subject to GDPR regardless of server location. A company operating an AI system in financial services, healthcare, or critical infrastructure faces sector-specific AI regulation regardless of its registered address.

Jurisdiction choice affects tax treatment and some compliance costs, but it does not eliminate regulatory exposure in target markets. Founders who structure primarily for tax efficiency without first mapping their regulatory obligations often discover at a late stage that their structure does not reduce their compliance burden at all.

Under EU AI Act high-risk classification, GPAI (General Purpose AI) rules, or sector-specific AI regulation in financial services, healthcare, or critical infrastructure, the model is not just software — it is a regulated product. This affects where it can be deployed, what technical documentation and conformity assessments are required, and critically, who bears liability when things go wrong.

This means the IP holding structure is not just a tax question — it is also a liability architecture question. Where the model sits in the corporate structure determines who is legally responsible for its outputs and who faces regulatory action if the model causes harm. AI IP ownership analysis must precede any structural decisions.

Where to hold the AI model and IP — the core decision

Choosing where to locate your AI IP ownership entity involves weighing tax efficiency, investor credibility, regulatory posture, and substance requirements. The four common jurisdictions each offer a distinct profile — none is universally correct.

Where to operate — regulatory exposure by market

Regulatory obligation is determined by where your AI system operates and who it affects, not where your company is registered. This is the most consequential misunderstanding founders make when planning their cross-border structuring. The three primary markets (the EU, the US, and the UAE) have materially different regulatory profiles in 2026.

European Union

- The EU AI Act applies to any AI system placed on the EU market or affecting EU users; it is extraterritorial, regardless of incorporation location

- High-risk system obligations and GPAI (General Purpose AI) rules apply to all providers and deployers targeting the EU, not just EU-incorporated entities

- Operating entity in EU simplifies compliance administration — notified body engagement, conformity assessments, local representative requirements — but does not create the regulatory obligation

- GDPR applies independently as a parallel obligation for any AI system processing EU personal data, including training data

United States

- No federal AI Act equivalent exists as of 2026. Federal regulation remains sector-specific: FTC (consumer protection and unfair practices), SEC (AI in financial advice), FDA (AI in medical devices)

- State-level AI laws are emerging. The Colorado AI Act and California proposals are the most significant, and compliance obligations vary by state and use case

- US operating entity is often required for enterprise sales and banking relationships — but this is a commercial necessity, not a regulatory compliance driver in most cases

- Regulatory trigger is sector and use case, not incorporation jurisdiction — a US C-Corp does not reduce compliance exposure if your AI product falls within a regulated sector

United Arab Emirates

- The UAE AI Strategy and the ADGM AI regulatory framework are actively developing in 2026, but the overall regulatory environment remains materially lighter-touch than the EU's

- Dubai's VARA (Virtual Assets Regulatory Authority) rules apply if your AI product intersects with crypto or virtual asset financial services; this is a meaningful carve-out for AI fintech

- Good jurisdiction for Middle East-focused AI businesses with a financial services component — ADGM provides regulatory standing and banking relationships for MENA market entry

- ADGM Financial Services Regulatory Authority increasingly active on AI governance — expect more detailed requirements to develop through 2026 and beyond

Regulatory exposure follows your users and your use case — not your registered address. Structure for tax efficiency, but do not assume your incorporation jurisdiction changes your compliance obligations in markets where you deploy AI systems. The question is not “where am I registered” but “where does my AI operate and who does it affect.” Our AI law practice advises on market-specific compliance obligations before and after structural decisions are made.
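The principle that exposure follows users and use case, not registration, can be expressed as a checklist function. This is a minimal sketch under stated assumptions. The `Deployment` record and the sector labels are hypothetical, and the trigger list is a deliberate simplification of the market bullets above. Real classification under each regime needs case-specific analysis. Note that `incorporated_in` is stored but never consulted.

```python
from dataclasses import dataclass, field


@dataclass
class Deployment:
    incorporated_in: str          # recorded for completeness; never used to decide exposure
    user_markets: set[str]        # where the AI system operates, e.g. {"EU", "US", "UAE"}
    sectors: set[str] = field(default_factory=set)    # hypothetical labels, e.g. {"medical_device"}
    us_states: set[str] = field(default_factory=set)  # e.g. {"CO"} for Colorado
    processes_eu_personal_data: bool = False
    virtual_asset_services: bool = False


def regimes_to_assess(d: Deployment) -> set[str]:
    """Simplified trigger list driven by users and use case, not by d.incorporated_in."""
    regimes: set[str] = set()
    if "EU" in d.user_markets:
        regimes.add("EU AI Act")
    if d.processes_eu_personal_data:
        regimes.add("GDPR")  # parallel obligation, independent of the AI Act
    if "US" in d.user_markets:
        regimes.add("FTC")
        if "financial_advice" in d.sectors:
            regimes.add("SEC")
        if "medical_device" in d.sectors:
            regimes.add("FDA")
        if "CO" in d.us_states:
            regimes.add("Colorado AI Act")
    if "UAE" in d.user_markets and d.virtual_asset_services:
        regimes.add("VARA")  # Dubai virtual asset rules
    return regimes


# Same users, different incorporation: identical exposure.
delaware = Deployment("Delaware", {"EU"}, processes_eu_personal_data=True)
adgm = Deployment("ADGM", {"EU"}, processes_eu_personal_data=True)
print(regimes_to_assess(delaware) == regimes_to_assess(adgm))  # True
print(sorted(regimes_to_assess(delaware)))  # ['EU AI Act', 'GDPR']
```

The design choice worth noticing is what the function leaves out. Because incorporation never enters the logic, moving the company changes nothing in the output. The only way to change the output is to change where the system operates or what it does.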

Liability architecture: separating the model from the product

The most important structural decision for AI companies is not which jurisdiction to use — it is how to separate the model IP from the product layer that deploys it. This separation determines who bears liability when an AI system causes harm, who faces regulatory action, and what an acquirer actually buys. Our AI structuring & investments team advises on this architecture at every stage.

Decision framework: matching structure to stage

The right structure depends on where you are, not on some idealised end-state. Complexity that makes sense at Series B can be a distraction and a cost at pre-revenue. This framework gives you the decision logic by stage — with one consistent thread: IP ownership documentation matters from day one regardless of how simple the structure is.

At pre-revenue, use a single entity in whichever jurisdiction gets you banking and investor credibility fastest, typically Delaware (US), UK Limited, or a UAE entity depending on your investor base and target market. Multi-entity complexity is not worth the overhead at this stage.

What does matter: document IP ownership from day one. Who built what. Founder assignment agreements signed and in place. Training data provenance recorded — what data was used, under what licence, for what purpose. Employment agreements with IP assignment clauses covering all contributors. This documentation is the foundation of everything that comes later — at funding, at M&A, and if you ever face a regulatory audit. Structure is less important than documentation at this stage.
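The day-one documentation described above amounts to a small set of records that can be checked for gaps. The sketch below is hypothetical throughout. The `Contributor` and `DatasetRecord` fields are assumptions chosen to mirror the items listed (IP assignment, data source, licence, purpose), and the sample entries are invented. The point is that an unrecorded licence or an unsigned assignment shows up now, not in due diligence.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Contributor:
    name: str
    role: str                    # "founder", "employee" or "contractor"
    ip_assignment_signed: bool   # founder assignment or IP clause in their agreement


@dataclass(frozen=True)
class DatasetRecord:
    source: str
    licence: Optional[str]       # None means the licence was never recorded
    purpose: Optional[str]       # e.g. "pre-training", "fine-tuning"


def documentation_gaps(people: list[Contributor], datasets: list[DatasetRecord]) -> list[str]:
    """List missing records that a funder, acquirer or auditor would ask about."""
    gaps = []
    for p in people:
        if not p.ip_assignment_signed:
            gaps.append(f"{p.name} ({p.role}): no signed IP assignment")
    for d in datasets:
        if d.licence is None:
            gaps.append(f"{d.source}: licence not recorded")
        if d.purpose is None:
            gaps.append(f"{d.source}: purpose not recorded")
    return gaps


# Hypothetical early-stage team and data sources.
team = [
    Contributor("Founder A", "founder", True),
    Contributor("Contractor B", "contractor", False),
]
data = [
    DatasetRecord("Public web crawl", None, "pre-training"),
    DatasetRecord("Customer support logs", "customer agreement", "fine-tuning"),
]
for gap in documentation_gaps(team, data):
    print(gap)
```

Here the output flags the contractor's missing assignment and the web crawl's unrecorded licence. Both are easier to fix at pre-revenue than at a funding round.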

When the model is generating revenue and becoming a genuinely valuable asset, consider separating an IP holdco from the operating company — particularly if an exit is on the horizon or a funding round is approaching where acquirers or investors will conduct IP due diligence.

Choose the holdco jurisdiction based on investor requirements and target acquirer profile — not purely on tax rate. A UAE holdco may be tax-efficient but may require additional legal comfort for a US or European institutional investor. A Singapore holdco may be preferred by Asian investors. An Irish holdco may be the default for EU-facing businesses expecting EU acquirers. Tax is a factor; it is not the only factor. At this stage, get a holding and IP structuring assessment done before the round closes.

When deploying AI systems into multiple regulated markets, set up operating entities in each relevant regulatory zone. The IP holdco licences the model to each operating entity. Intercompany agreements are drafted, priced at arm’s length, and documented, not left to a one-line board resolution.

Per-market compliance assessment must be completed before launch, not after. The EU AI Act classification of your system, whether it triggers high-risk obligations or GPAI rules, what local representation requirements apply — these need to be resolved before you have EU users, not 6 months after. The cost of getting it wrong after launch is materially higher than the cost of a pre-launch regulatory assessment. Our cross-border structuring services cover this multi-entity buildout in full.
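The per-market gate described here can be tracked as a simple readiness check. This is a minimal sketch assuming a hypothetical `MarketEntry` record. It treats a market as blocked until three conditions hold: a signed holdco-to-OpCo licence exists, the arm's-length pricing basis is documented, and the compliance assessment is dated on or before the planned launch. It only records whether that work is done. It does not price the licence or classify the AI system.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass(frozen=True)
class MarketEntry:
    market: str
    operating_entity: str              # hypothetical entity name
    licence_agreement_signed: bool     # written holdco -> OpCo licence, not a one-line resolution
    pricing_basis_documented: bool     # arm's-length pricing rationale on file
    compliance_assessed_on: Optional[date]
    planned_launch: date


def launch_blockers(entry: MarketEntry) -> list[str]:
    """Return the reasons a market is not ready to launch (empty list means ready)."""
    blockers = []
    if not entry.licence_agreement_signed:
        blockers.append("no signed intercompany licence from the IP holdco")
    if not entry.pricing_basis_documented:
        blockers.append("arm's-length pricing not documented")
    if entry.compliance_assessed_on is None or entry.compliance_assessed_on > entry.planned_launch:
        blockers.append("market compliance assessment not completed before launch")
    return blockers


eu = MarketEntry("EU", "Hypothetical OpCo (EU)", True, True, None, date(2026, 9, 1))
print(launch_blockers(eu))  # ['market compliance assessment not completed before launch']
```

A missing assessment date counts as a blocker in the same way as a late one. This mirrors the point above that the assessment belongs before launch, not after it.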

If your AI system is classified as high-risk under the EU AI Act — autonomous driving, medical device AI, credit scoring, employment screening, critical infrastructure — or operates in financial services, healthcare, or a sector with existing AI-specific regulation: get a regulatory opinion before finalising your corporate structure.

The compliance architecture drives the corporate structure in these cases, not the other way around. The obligations that apply — conformity assessment requirements, technical documentation, notified body engagement, post-market monitoring — determine where the accountable entity must be, what it must be capable of, and whether a holding structure is even appropriate for the model-deploying entity. Structuring first and asking regulatory questions later is the most expensive version of this process. See our AI governance & risk practice for regulated sector AI structuring.
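The stage framework above can be condensed into decision logic. The sketch below is an illustrative summary, not a substitute for advice. The `Stage` names and the wording of each step are assumptions paraphrasing this section. It encodes the two constants that run through the framework: IP documentation comes first at every stage, and a high-risk or regulated use case inserts a regulatory opinion ahead of any structural step.

```python
from enum import Enum


class Stage(Enum):
    PRE_REVENUE = "pre-revenue"
    REVENUE = "model generating revenue"
    MULTI_MARKET = "deploying into multiple regulated markets"


def structuring_steps(stage: Stage, high_risk_or_regulated: bool) -> list[str]:
    """Order of work by stage; a regulated use case adds a regulatory opinion up front."""
    steps = ["Document IP ownership: assignments, contributor agreements, data provenance"]
    if high_risk_or_regulated:
        # Compliance architecture drives the corporate structure, so it comes first.
        steps.append("Obtain a regulatory opinion before finalising the corporate structure")
    if stage is Stage.PRE_REVENUE:
        steps.append("Single entity where banking and investor credibility come fastest")
    elif stage is Stage.REVENUE:
        steps.append("Assess an IP holdco/OpCo split; pick the holdco on investor and acquirer profile")
    else:
        steps.append("Operating entity per regulatory zone, licensed by the IP holdco at arm's length")
        steps.append("Complete the per-market compliance assessment before launch")
    return steps


for step in structuring_steps(Stage.MULTI_MARKET, high_risk_or_regulated=True):
    print(step)
```

Treating "regulated" as a flag rather than a fourth stage reflects the text. A high-risk classification can apply at any stage, and it changes the order of work rather than the end-state.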
