Why Big Tech Is Giving AI Models Away (Almost) for Free: The New Wave of Permissive Licenses


Meta, Google, Microsoft and Mistral are releasing some of the world's most powerful AI models under permissive licences — for anyone to use, modify, and deploy. This post explains the real business logic behind the strategy, decodes what "permissive" actually means legally, and sets out the hidden risks for businesses and developers who rely on these models.

1. The Open-Source AI Revolution: What Is Actually Happening. From GPT-4's closed walls to LLaMA 3, Mistral and Gemma: how the model-release landscape shifted after 2023 and what "open weights" really means.
2. Why Big Tech Gives Models Away: The Real Business Logic. Developer ecosystem lock-in, cloud revenue, regulatory positioning and competitive strategy: the six reasons trillion-dollar companies release free AI.
3. Decoding AI Licences: What "Permissive" Really Means and What It Doesn't. Apache 2.0, LLaMA 3, Gemma, Mistral: a plain-language breakdown of what each licence actually permits, restricts, and leaves dangerously unresolved.
4. Legal Grey Zones: Copyright, Training Data and Downstream Liability. Who is liable when an open model causes harm? What does "as is" really mean? The IP, copyright and liability questions these licences deliberately leave open.
5. EU AI Act and the GPAI Model Rules: What Open-Source Providers Must Still Do. The EU AI Act's partial open-source exemption explained: what obligations apply regardless, the systemic risk threshold, and how deployers inherit regulatory duties.
6. What This Means for Businesses: A Legal Due Diligence Checklist. Before your company deploys an open AI model: the five legal questions to answer, the compliance risks to manage, and how AI law counsel can help.

In early 2023, the dominant narrative in AI was one of secrecy. OpenAI had released GPT-4 as a fully closed model — no weights, no architecture details, no access without an API key. Google followed a similar path with Gemini. The assumption was that the most powerful AI models would remain proprietary assets, accessible only through controlled commercial interfaces. That narrative collapsed faster than almost anyone predicted.

Within eighteen months, Meta released LLaMA 2 and then LLaMA 3, models competitive with the best commercial offerings, under licences that allowed free commercial use. Mistral AI released Mistral 7B under Apache 2.0, one of the most permissive software licences in existence. Google released Gemma. Microsoft released the Phi series. Falcon, from the Technology Innovation Institute in Abu Dhabi, became one of the most downloaded open models. The world went from a handful of gated models to a landscape where dozens of frontier-class AI systems were publicly downloadable.

When a company spends hundreds of millions of dollars training an AI model and then releases it for free, the question is not whether they are being generous — it is what they gain in return. The open-release strategy is one of the most calculated moves in the technology industry's recent history. Understanding it matters not just for business strategy, but for understanding the legal and regulatory implications that follow.

| Stakeholder | What they gain | What they give up | Net position |
|---|---|---|---|
| Meta (LLaMA) | Ecosystem lock-in, regulatory goodwill, research talent, competitive disruption of OpenAI | Model weights (which cost $100M+ to train) | Strong positive |
| Google (Gemma) | Developer mindshare, cloud compute revenue, ability to compete in both open and closed segments | Some model capability disclosure | Positive |
| Microsoft (Phi) | Azure cloud consumption, enterprise developer adoption, research positioning | Small model weights (Phi is relatively compact) | Positive (low cost) |
| DeepSeek | Global talent attraction, credibility, geopolitical soft power for Chinese AI | Model weights and architectural insights | Strategic; long-term gain unclear |
| Startups and developers | Frontier AI capability without API costs; ability to fine-tune for niche use cases | Must manage compliance, compute, and licence obligations independently | Mixed; benefit with hidden costs |
| OpenAI / Anthropic | Nothing; they lose the premium pricing power of proprietary frontier models | Revenue from API access as open alternatives approach parity | Negative pressure |

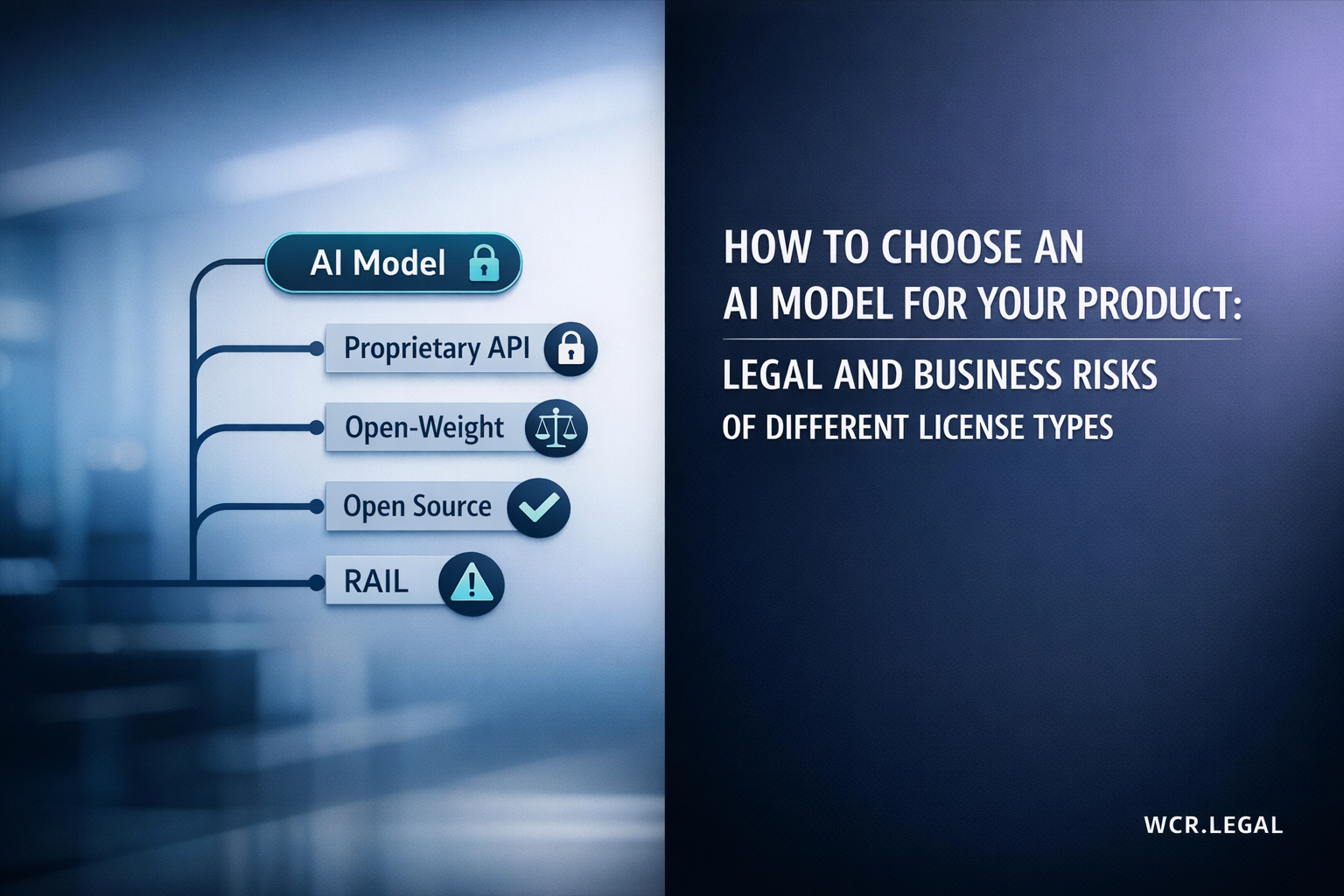

The word "permissive" in an AI licence context is doing a great deal of legal work — and businesses that take it at face value are taking on significant legal exposure. A permissive software licence like Apache 2.0 means something very specific and well-understood in software law. But most AI model releases do not use Apache 2.0 — they use custom licences that borrow the feel of permissiveness while containing restrictions that can materially affect how the model can be used commercially. Here is what each major licence actually says, in plain language.

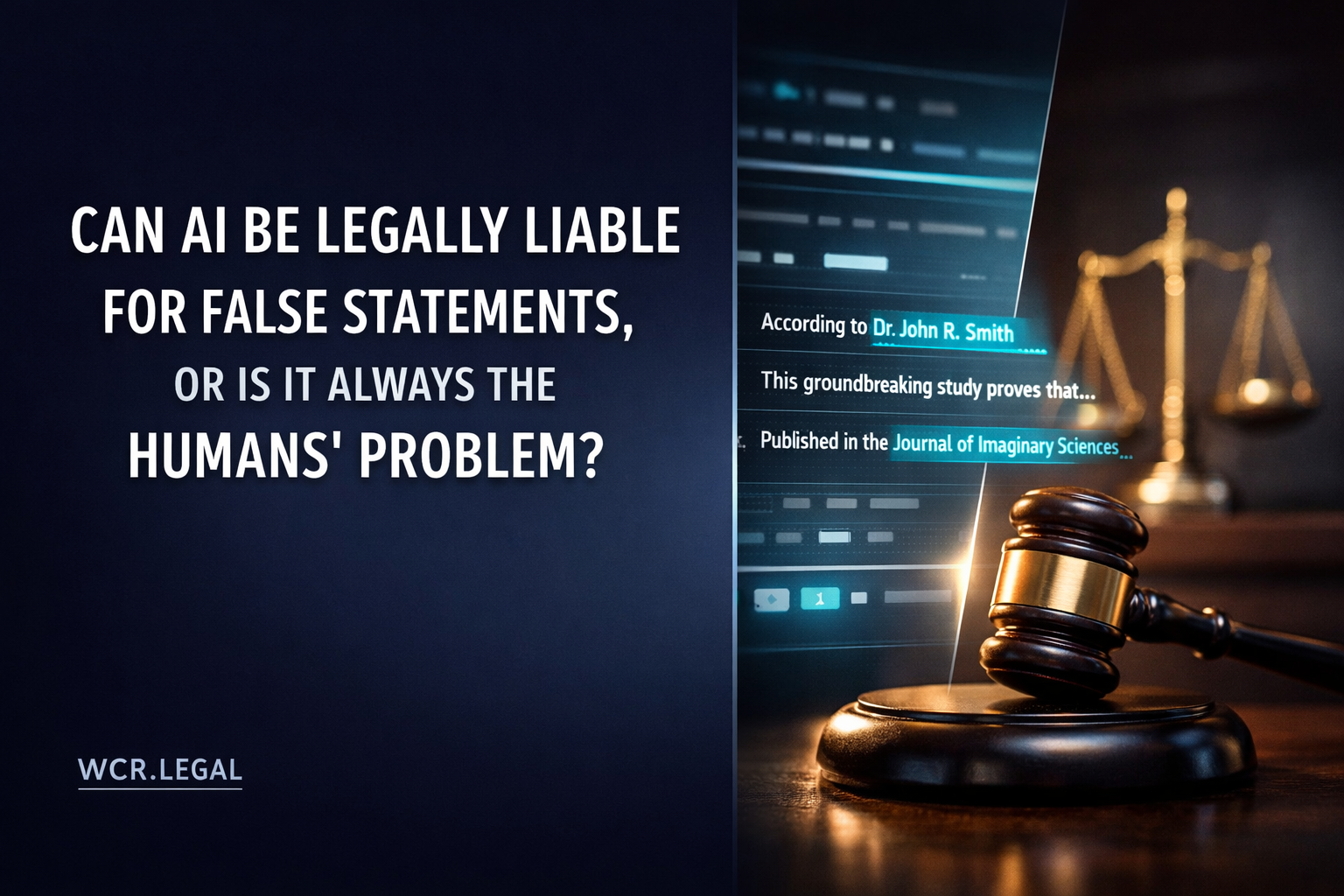
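The distinction between a standard permissive licence and a custom one can be sketched as a simple triage step. This is a minimal illustration under stated assumptions: `STANDARD_PERMISSIVE`, `Triage`, `triage` and the `inventory` mapping are hypothetical names, the set of standard licences is not exhaustive, and only the Mistral 7B / Apache 2.0 pairing is stated in this post. The other entries are marked "custom" as placeholders for their bespoke terms.

```python
from dataclasses import dataclass

# Standard licences whose terms are well understood in software law.
# Assumption: this short set is illustrative, not exhaustive.
STANDARD_PERMISSIVE = {"Apache-2.0", "MIT", "BSD-3-Clause"}


@dataclass(frozen=True)
class Triage:
    model: str
    licence: str
    custom_terms: bool  # True when the licence is not a recognised standard one
    note: str


def triage(model: str, licence: str) -> Triage:
    """Separate standard permissive licences from custom ones that need a closer read."""
    if licence in STANDARD_PERMISSIVE:
        return Triage(model, licence, False, "standard permissive licence")
    return Triage(model, licence, True, "custom terms: check restrictions before commercial use")


# Hypothetical inventory. Only the Mistral 7B entry is stated in this post;
# "custom" is a placeholder label for a bespoke licence, not its real name.
inventory = {
    "Mistral 7B": "Apache-2.0",
    "LLaMA 3": "custom",
    "Gemma": "custom",
}

results = [triage(model, licence) for model, licence in inventory.items()]
for r in results:
    print(f"{r.model}: custom_terms={r.custom_terms} ({r.note})")
```

A flag like `custom_terms` only tells a team where to look first; the restrictions themselves still have to be read in the licence text.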

Open AI model licences are remarkably good at defining what the model provider will allow you to do. They are remarkably poor at clarifying who bears liability when the model does something harmful, whether the training data was lawfully obtained, and what happens to the intellectual property in outputs the model generates. These are not gaps in the licence by oversight — they are deliberate allocations of risk away from the model provider and onto the deployer. Understanding what the licence does not say is as important as reading what it does.

[Figure: the parties involved, in the order shown in the original, with examples: (Meta, Mistral, Google); (Hugging Face community, B2B model vendors); (SaaS companies, enterprises, developers); (consumers, B2B customers)]

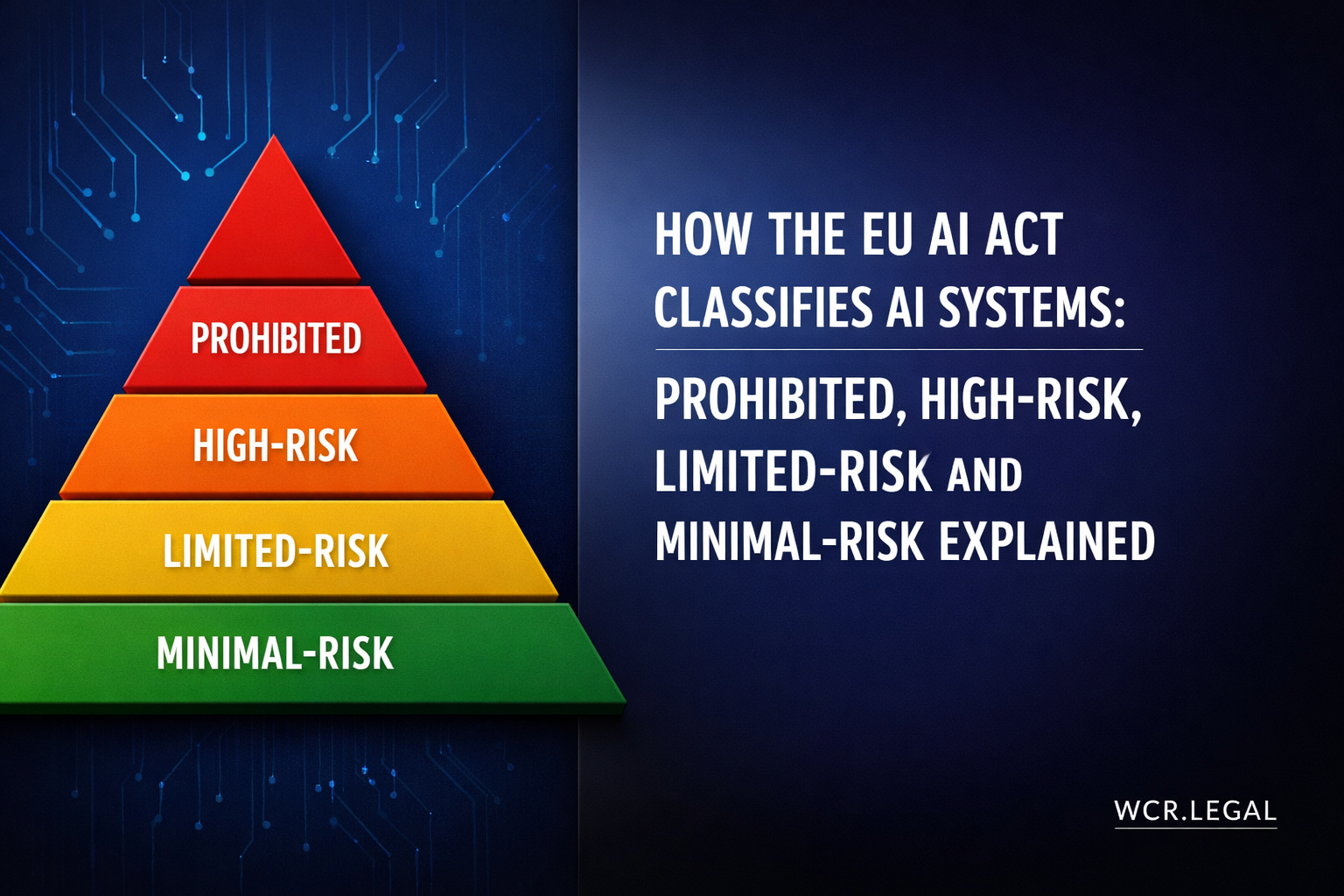

The EU AI Act, which entered into force in August 2024 with GPAI obligations applying from August 2025, creates a new regulatory category specifically for large AI models: General-Purpose AI (GPAI) models. This category directly affects the most powerful open-weight models, such as LLaMA 3.1 405B, Mistral Large, and any model with systemic risk potential. Critically, the EU AI Act provides only a partial exemption for open-source models, and most businesses operating in the EU will find that the exemption does not eliminate their compliance obligations, because they are deployers, not providers.

[Table: EU AI Act provisions referenced: Article 53(1)(a), Article 53(1)(c), Article 55(1)(a), Article 55(1)(b)]

The open AI model landscape offers extraordinary opportunities: frontier-class AI capability at a fraction of the cost of proprietary API access, full customisability through fine-tuning, and freedom from vendor lock-in. But the legal and regulatory overhead that comes with deployment is real, growing, and often underestimated. The five questions below are the starting point for any serious legal due diligence on open AI model deployment — and the checklist that follows should be completed before any open model goes into production.