How the EU AI Act Classifies AI Systems: Prohibited, High‑Risk, Limited‑Risk and Minimal‑Risk Explained

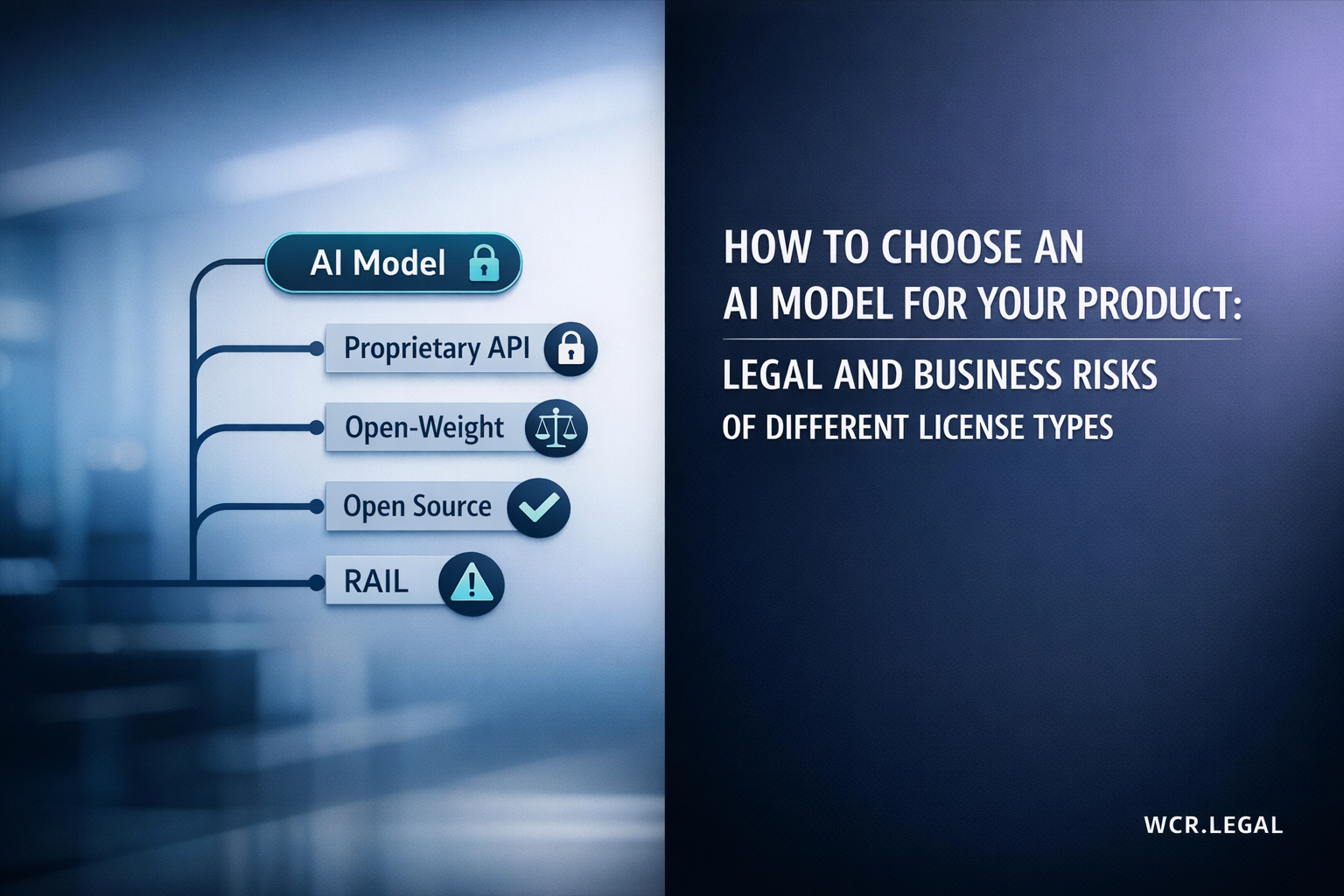

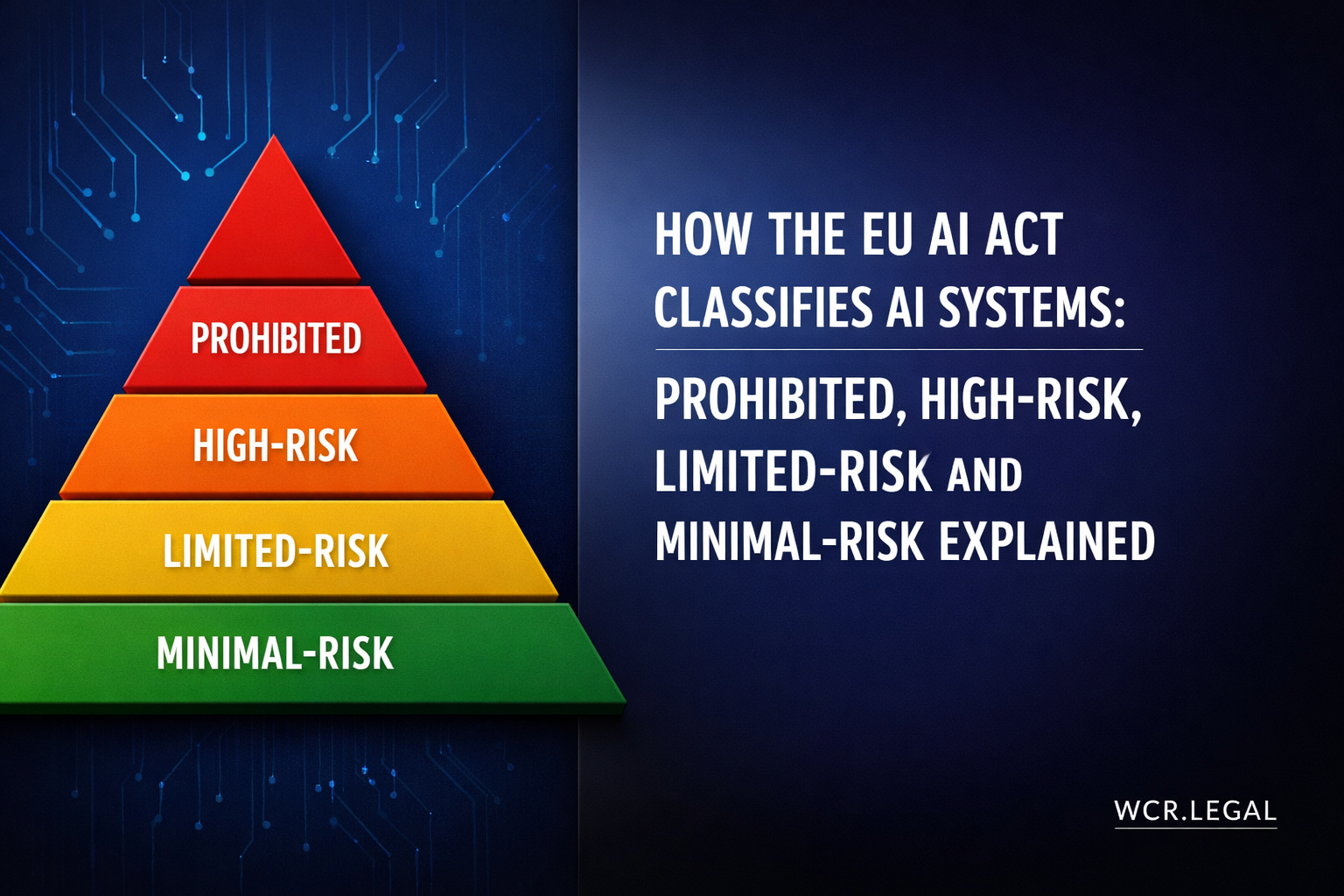

The EU AI Act does not regulate all artificial intelligence the same way. Instead, it uses a four-tier risk-based framework — ranging from outright prohibition to entirely voluntary compliance — to match the intensity of regulatory requirements to the severity of potential harm. Understanding which tier applies to your AI system is the first and most consequential step in any EU AI Act compliance programme.

Section 1 — The Risk-Based Architecture: Purpose and Legal Design

The EU AI Act's central design choice is to reject blanket AI regulation in favour of a graduated, risk-proportionate framework. Rather than imposing the same obligations on an AI-powered chess app and a facial recognition system used by border authorities, the Act calibrates the intensity of regulation to the potential severity of harm to fundamental rights, health, safety, and democratic processes. This architecture, built on four tiers ranging from absolute prohibition to entirely voluntary compliance, is the Act's defining structural feature.

The Four Tiers at a Glance

Tier 1 (prohibited AI, Article 5): Absolute ban. Eight specific AI practices are prohibited regardless of claimed purpose or benefit. There is no conformity assessment, no exemption process, and no transition period beyond the initial implementation window. Any system that falls within Article 5 must be discontinued or never placed on the market.

Examples: social scoring, subliminal manipulation causing harm, exploitation of vulnerabilities, most real-time remote biometric identification in public spaces for law enforcement.

Tier 2 (high-risk AI, Article 6): Permitted but heavily regulated. High-risk AI systems may be placed on the EU market, but only after satisfying a comprehensive set of pre-market and ongoing obligations: conformity assessment, technical documentation, CE marking, EU database registration, a quality management system (QMS), and post-market monitoring.

Covers AI embedded in safety-critical products (Annex II) and AI in eight sensitive sectors including biometrics, employment, law enforcement, and access to essential services (Annex III).

Tier 3 (limited-risk AI, Article 50): Permitted with specific transparency obligations. Systems in this tier interact directly with people (chatbots, emotion-detection tools, synthetic media generators) but do not carry the systematic risks of high-risk AI. The single mandatory obligation is transparency: affected persons must be informed they are interacting with, or subject to, an AI system.

Penalties for non-disclosure (up to €15M or 3% of global annual turnover) are lower than those for Tier 1 prohibited practices but remain significant.

Tier 4 (minimal-risk AI): Permitted with no mandatory AI Act obligations. The vast majority of AI applications in current commercial use fall in this tier: spam filters, recommendation engines, AI-assisted content creation tools, predictive text, and most AI in video games and productivity software.

Providers and deployers may voluntarily adhere to codes of conduct (Article 95) and the EU AI Pact, but there is no legal compulsion to do so.
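The "€15M or 3%" ceiling quoted for transparency breaches can be made concrete with a short calculation. The sketch below assumes the reading of Article 99 under which the ceiling is whichever of the two amounts is higher, and whichever is lower for SMEs. The function name and parameters are illustrative, and the result is only an upper bound, not a prediction of any actual fine.

```python
def fine_ceiling_eur(worldwide_turnover_eur: int,
                     fixed_cap_eur: int = 15_000_000,
                     turnover_pct: int = 3,
                     is_sme: bool = False) -> int:
    """Upper bound of a fine for one penalty band (illustrative only).

    Assumption: the ceiling is the higher of the fixed cap and the
    turnover percentage, or the lower of the two for SMEs.
    """
    pct_amount = worldwide_turnover_eur * turnover_pct // 100
    if is_sme:
        return min(fixed_cap_eur, pct_amount)
    return max(fixed_cap_eur, pct_amount)


# Large group: 3% of turnover exceeds the fixed cap.
print(fine_ceiling_eur(2_000_000_000))
# Mid-size company: the fixed cap exceeds 3% of turnover.
print(fine_ceiling_eur(100_000_000))
# Same turnover treated as an SME: the lower amount applies.
print(fine_ceiling_eur(100_000_000, is_sme=True))
```

The same function can model the prohibited-practice band by passing a different fixed cap and percentage.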

How the AI Act Interacts with Existing EU Product Law

The AI Act is built on top of the EU's existing New Legislative Framework (NLF) for product safety — the same legal architecture used for medical devices, machinery, toys, and construction products. This integration is deliberate: many high-risk AI systems are embedded in physical products already regulated under sectoral EU law, and the AI Act avoids creating parallel obligations that would duplicate that existing framework.

Which Actors Are Most Affected by Which Tiers

The four tiers do not affect all actors equally. Providers of high-risk AI systems bear the heaviest burden. Deployers of the same systems carry a secondary but independent set of obligations. Importers and distributors have lighter duties, primarily related to verification and compliance checking. The table below summarises which tier creates significant obligations for which supply chain actor.

Section 2 — Prohibited AI: Article 5's Hard Limits

Article 5 of the EU AI Act establishes the absolute floor of AI regulation: eight categories of AI practice so harmful to fundamental rights, human dignity, and democratic values that no justification — commercial, scientific, or governmental — can outweigh the prohibition. Unlike every other tier in the Act, there is no conformity pathway, no exemption process, and no derogation available under the general safety framework. A system falling within Article 5 may not be placed on the market, put into service, or used in the European Union.

AI systems that deploy subliminal techniques beyond a person's consciousness, or other manipulative or deceptive techniques that exploit psychological weaknesses, to distort a person's behaviour in a way that causes or is likely to cause significant harm are prohibited. The prohibition covers both direct harm to the individual and harm to third parties.

This extends beyond purely subliminal (sub-perceptual) techniques to include any approach that bypasses rational agency through deception or exploitation of cognitive bias.

AI systems that exploit the vulnerabilities of a specific group of persons — due to their age, disability, or social or economic situation — through techniques that distort their behaviour in a way that causes or is likely to cause significant harm to that person or another person are prohibited.

This prohibition is distinct from the general manipulation ban: it specifically addresses targeted exploitation of structural vulnerability rather than generic deceptive AI. An AI marketing system that specifically targets persons with identified cognitive disabilities to drive purchases would fall within this prohibition.

AI systems used to evaluate or classify natural persons based on their social behaviour or personal characteristics over a period of time are prohibited where the resulting score leads to detrimental treatment that is either disproportionate to the original behaviour or unjustifiably applied in unrelated social contexts. In the adopted text the prohibition is not confined to public authorities; it applies to private actors as well.

This prohibition targets China-style social credit systems applied at population scale. It does not prohibit standard risk assessment tools used in regulated contexts (credit scoring by private lenders, fraud detection by banks) provided these are not used to make broader social determinations unrelated to the specific regulated activity.

AI systems used by law enforcement to assess the risk of a natural person committing a criminal offence based solely on profiling or personality trait assessment, rather than on objective and verifiable facts directly linked to criminal activity, are prohibited. This prohibition specifically covers "pre-crime" prediction tools.

The prohibition does not extend to AI systems that support human assessment of offending risk where that assessment is grounded in documented behavioural history and verified facts — the specific target is automated personality-based prediction without factual grounding.

AI systems that create or expand facial recognition databases through the untargeted scraping of facial images from the internet or CCTV footage are prohibited. This prohibition applies regardless of the stated purpose of the database.

This provision is a direct response to the databases built by companies such as Clearview AI, which scraped billions of facial images from social media to create commercially available biometric identification databases. The prohibition extends to public authority databases built the same way.

AI systems used to infer the emotional states of natural persons in the workplace and in educational institutions are prohibited, except where the system is intended to be used for medical or safety reasons. The prohibition applies to employers using AI to monitor employee emotions through facial analysis, voice analysis, or physiological signals, and to educational institutions monitoring student engagement or emotional states through similar means.

AI systems that use biometric data — including physiological, behavioural, or psychological signals — to categorise natural persons to deduce or infer their race, political opinions, trade union membership, religious or philosophical beliefs, sex life, or sexual orientation are prohibited.

This prohibition extends to any system that uses observable physical characteristics as a proxy for protected class membership, regardless of claimed accuracy or stated purpose. It covers both direct and indirect inference methods.

AI systems used by law enforcement for real-time remote biometric identification — typically facial recognition in live CCTV feeds — in publicly accessible spaces are prohibited as a general rule. "Real-time" means that identification and searching happen before, during, or shortly after the biometric capture, without a significant delay that allows for human review before action.

Section 3 — High-Risk AI: Annex II, Annex III, and the Classification Rules

The high-risk tier of the EU AI Act is defined by Article 6, which sets out two distinct routes through which an AI system becomes subject to the full set of provider and deployer obligations. An AI system is high-risk if it is a safety component of a regulated product listed in Annex II — or if it independently falls within one of the eight sectors and use cases enumerated in Annex III. These two routes follow different conformity assessment pathways and interact differently with existing EU product law.

An AI system is automatically classified as high-risk under Article 6(1) if it is itself a product covered by, or a safety component of a product covered by, one of the EU harmonisation legislation instruments listed in Annex II (numbered Annex I in the adopted Regulation (EU) 2024/1689), and that product is required to undergo a third-party conformity assessment by a notified body.

This route does not require analysis of risk to fundamental rights — classification is determined solely by the product category. If a medical device, a vehicle, or a piece of industrial machinery incorporates AI as a safety-critical component, that AI component is high-risk regardless of how it is designed or what it does.

Under Article 6(2), an AI system used in one of the eight sectors or use cases listed in Annex III is classified as high-risk — subject to the Article 6(3) filter. Unlike Annex II, classification here requires a contextual assessment: not every AI system used in these sectors is high-risk. The classification depends on what the system does and who it affects.

The eight Annex III categories are: (1) biometric identification and categorisation; (2) critical infrastructure management; (3) education and vocational training; (4) employment, workers management and access to self-employment; (5) access to essential private services and public services and benefits; (6) law enforcement; (7) migration, asylum and border control management; and (8) administration of justice and democratic processes.

A key difference from Annex II: Annex III systems do not automatically require a notified body. Most Annex III high-risk systems follow a conformity assessment based on internal control (Annex VI in the adopted text): the provider conducts the assessment itself and signs the EU Declaration of Conformity. Only the biometric systems listed in Annex III can require a notified body, and only where harmonised standards or common specifications are not applied in full (Article 43(1)).
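The two routes into the high-risk tier can be sketched as a small function. This is a minimal illustration under stated assumptions: the `ProductContext` fields and the returned route labels are hypothetical names, and each boolean stands in for a legal assessment that has to be made separately.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ProductContext:
    # Hypothetical fields; each one is the outcome of a legal assessment.
    is_product_or_safety_component: bool      # AI is the product or its safety component
    covered_by_listed_harmonisation_law: bool  # product falls under listed EU product law
    third_party_assessment_required: bool      # notified-body assessment required for it
    annex_iii_use_case: Optional[str] = None   # e.g. "employment", or None


def high_risk_route(ctx: ProductContext) -> Optional[str]:
    """Return which Article 6 route applies, or None if neither does."""
    # Article 6(1): all three conditions must hold together.
    if (ctx.is_product_or_safety_component
            and ctx.covered_by_listed_harmonisation_law
            and ctx.third_party_assessment_required):
        return "article_6_1_product_route"
    # Article 6(2): listed use case, still subject to the Article 6(3) filter.
    if ctx.annex_iii_use_case is not None:
        return "article_6_2_annex_iii_route"
    return None


device = ProductContext(True, True, True)
cv_screening = ProductContext(False, False, False, annex_iii_use_case="employment")
print(high_risk_route(device), high_risk_route(cv_screening))
```

The first route is checked first because it depends only on the product category, whereas the second still needs the contextual analysis described above.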

The Article 6(3) Filter — When Annex III Systems Are Not High-Risk

Article 6(3) introduces an important escape hatch: an AI system listed in Annex III is not classified as high-risk if it does not pose a significant risk of harm to the health, safety, or fundamental rights of natural persons. The provider must assess whether this filter applies and, if so, document that assessment before placing the system on the market, register the system in the EU database, and provide the documentation to national competent authorities on request (Article 6(4)).

The AI Act specifies that an Annex III AI system is not high-risk if it satisfies at least one of the following conditions:

Key procedural requirement: a provider who concludes their Annex III system is not high-risk because it satisfies one of these conditions must document the reasoning before the system is placed on the market and register the system in the EU database. This means the Article 6(3) filter is not a quiet internal decision: it creates a regulatory record that national competent authorities can request and scrutinise. Providers who rely on the filter without adequate documentation face the same enforcement risk as those who fail to classify a high-risk system correctly.
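The filter logic can be sketched in code. The four conditions below are paraphrased from Article 6(3) of the adopted Regulation, as is the rule that a system performing profiling of natural persons always remains high-risk. The class and field names are hypothetical, and the sketch enforces only the documentation step, not any registration step.

```python
from dataclasses import dataclass


@dataclass
class FilterAssessment:
    # Paraphrased Article 6(3) conditions (field names are illustrative).
    narrow_procedural_task: bool = False
    improves_completed_human_activity: bool = False
    detects_patterns_without_replacing_review: bool = False
    preparatory_task_only: bool = False
    # Profiling of natural persons keeps the system high-risk regardless.
    performs_profiling: bool = False
    # Written reasoning the provider keeps on file.
    reasoning: str = ""


def filter_applies(a: FilterAssessment) -> bool:
    """True if the Annex III system may be treated as not high-risk."""
    if a.performs_profiling:
        return False
    condition_met = any([
        a.narrow_procedural_task,
        a.improves_completed_human_activity,
        a.detects_patterns_without_replacing_review,
        a.preparatory_task_only,
    ])
    if condition_met and not a.reasoning.strip():
        # Relying on the filter without a record is the failure mode to avoid.
        raise ValueError("Filter relied on without documented reasoning")
    return condition_met


ok = FilterAssessment(narrow_procedural_task=True,
                      reasoning="Only converts scanned forms into structured fields.")
print(filter_applies(ok))
```

Raising an error when the reasoning is missing is a design choice of this sketch: it turns an undocumented exemption into something a compliance pipeline cannot silently accept.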

Conformity Assessment Pathways — Annex II vs. Annex III

Section 4 — The Eight Annex III Sectors: What High-Risk Looks Like in Practice

Annex III of the EU AI Act identifies eight sectors in which AI systems are presumptively high-risk — subject to the Article 6(3) filter discussed in Section 3. Within each sector, the Annex defines specific use cases rather than sweeping category-level inclusion. This means the classification question is not merely "is this AI used in healthcare?" or "is this AI used by law enforcement?" — it is "does this specific AI system perform the particular functions enumerated in Annex III for this sector?" The sector-by-sector breakdown below maps each category to what is in scope, what is out of scope, and the examples most likely to present classification difficulty.

Section 5 — Limited-Risk and Minimal-Risk: Transparency and the Voluntary Framework

The lower two tiers of the AI Act's risk framework reflect a deliberate policy choice: not every AI system that interacts with people warrants the heavy compliance burden imposed on high-risk systems. Limited-risk AI systems — those that create specific transparency risks without posing systematic safety or fundamental rights concerns — are subject to targeted disclosure obligations under Article 50. Minimal-risk AI systems, which represent the large majority of AI in commercial use today, face no mandatory obligations at all under the AI Act.

Limited-Risk AI — Article 50 Transparency Obligations

Article 50 of the AI Act creates four specific transparency obligations, each tied to a distinct interaction type. These obligations are primarily aimed at ensuring that people who interact with AI — or whose behaviour or state is assessed by AI — are aware they are doing so. The underlying rationale is informational autonomy: people should be able to choose how to engage with AI-generated or AI-mediated content and experiences once they know it is present.

The Article 50 disclosure requirements for deepfakes and other AI-generated content carry particular significance for political communications. AI systems used to generate or edit audio, video, or images of real political figures, or to generate persuasive political text, trigger mandatory disclosure requirements. Providers of AI systems that generate such content, including general-purpose AI systems, are required to ensure that outputs are marked in a machine-readable format and are detectable as artificially generated (Article 50(2)). This places an obligation on foundation model providers, not only on the downstream deployers who create the actual content.

There is no minimum harm threshold for the disclosure to apply: any AI-generated deepfake requires disclosure unless it clearly falls within the artistic/satirical carve-out. The practical challenge for media organisations, political campaigns, and communications agencies is designing workflows that automatically apply the required machine-readable labels to all AI-generated or AI-edited content before publication.
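A labelling workflow of the kind described above might attach a machine-readable record to each asset before publication. The sketch below is a minimal illustration only: the field names form a hypothetical schema, not a format prescribed by the Act or by any provenance standard, and the carve-out flag simply mirrors the artistic/satirical exception mentioned in the text.

```python
import hashlib
import json
from datetime import datetime, timezone


def build_ai_label(content: bytes, generator: str, is_deepfake: bool,
                   artistic_or_satirical: bool = False) -> dict:
    """Build a sidecar label for one AI-generated or AI-edited asset.

    Hypothetical schema for illustration; real deployments would follow
    whatever marking format their tooling and regulators accept.
    """
    return {
        "ai_generated": True,
        "generator": generator,
        # The hash ties the label to the exact bytes that were published.
        "content_sha256": hashlib.sha256(content).hexdigest(),
        "deepfake": is_deepfake,
        # Visible disclosure is needed for deepfakes outside the carve-out.
        "visible_disclosure_required": is_deepfake and not artistic_or_satirical,
        "labelled_at": datetime.now(timezone.utc).isoformat(),
    }


label = build_ai_label(b"<video bytes>", "example-model", is_deepfake=True)
print(json.dumps(label, indent=2))
```

A publishing system could refuse to release any asset that lacks such a record, which makes the labelling step hard to skip by accident.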

Minimal-Risk AI — No Mandatory AI Act Obligations

The vast majority of AI systems currently in commercial use fall into the minimal-risk tier. There are no AI Act compliance obligations — no conformity assessment, no registration, no technical documentation, no transparency disclosure — for these systems. The Act deliberately avoids imposing costs on beneficial, low-risk AI to preserve the EU's competitiveness and innovation capacity.

Voluntary Codes of Conduct and the EU AI Pact

Section 6 — Classifying Your AI System: Step-by-Step Decision Framework

Risk classification under the EU AI Act is not a single question with a binary answer. It is a sequential decision process that must be applied system-by-system, use-case by use-case, across every AI system your organisation develops, provides, or deploys. Getting the classification right matters enormously: under-classification exposes your organisation to enforcement risk and penalties; over-classification wastes resources on compliance work that is not legally required. The framework below provides a structured, article-by-article classification methodology.
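The sequential nature of the decision can be sketched as an ordered series of checks applied to each system in an inventory. This is a simplified illustration rather than a reproduction of the framework's individual steps: the `SystemFacts` fields are hypothetical, each boolean stands for a legal analysis done elsewhere, and the sketch returns a single tier even though Article 50 transparency duties can also attach to a high-risk system.

```python
from dataclasses import dataclass
from enum import Enum


class Tier(Enum):
    PROHIBITED = "prohibited"
    HIGH_RISK = "high-risk"
    LIMITED_RISK = "limited-risk"
    MINIMAL_RISK = "minimal-risk"


@dataclass
class SystemFacts:
    name: str
    article_5_practice: bool = False             # falls within a prohibited practice
    product_safety_route: bool = False           # high-risk via Article 6(1)
    annex_iii_use_case: bool = False             # matches a listed Annex III function
    article_6_3_filter_documented: bool = False  # documented "not high-risk" assessment
    article_50_interaction: bool = False         # chatbot, deepfake, emotion detection


def classify(facts: SystemFacts) -> Tier:
    # Checks run from the most to the least restrictive tier.
    if facts.article_5_practice:
        return Tier.PROHIBITED
    if facts.product_safety_route:
        return Tier.HIGH_RISK
    if facts.annex_iii_use_case and not facts.article_6_3_filter_documented:
        return Tier.HIGH_RISK
    if facts.article_50_interaction:
        return Tier.LIMITED_RISK
    return Tier.MINIMAL_RISK


inventory = [
    SystemFacts("spam-filter"),
    SystemFacts("cv-screening", annex_iii_use_case=True),
    SystemFacts("support-chatbot", article_50_interaction=True),
]
for system in inventory:
    print(system.name, "->", classify(system).value)
```

Running the checks in this order means a system is never assigned a lighter tier before the heavier ones have been ruled out, which guards against under-classification.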

The 7-Step Classification Framework

Common Misclassification Traps

The most common misclassification error is to classify by industry rather than by function. Annex III classifies by specific use-case function, not by sector. An AI system used by a hospital is not automatically high-risk; an AI system that performs triage scoring that influences admission decisions likely is. Always identify the precise function the AI performs and match it to the specific text of Annex III, not the general sector heading.

Having a human review AI output does not automatically remove a system from the high-risk classification. Annex III captures systems that "make or significantly influence" decisions — even advisory or recommender systems can meet this threshold if their recommendations are routinely followed or if the human reviewer lacks meaningful ability to assess or override them. Human oversight is a compliance obligation for high-risk systems, not a classification escape hatch.

The Article 6(3) "not high-risk" filter requires documented reasoning and registration in the EU database, so it is not a quiet internal decision. Providers who rely on the filter without a documented assessment are effectively self-classifying as lower than high-risk without the procedural protections that make that determination defensible. Best practice: treat the filter analysis as a mini-conformity assessment, document why each of the four criteria is or is not satisfied, and keep the record available for national authority inspection.

A deployer that substantially modifies a high-risk AI system, or that puts a general-purpose AI system to a high-risk use case not covered by the original provider's conformity assessment, is reclassified as a provider under Article 25. This catches companies that fine-tune, retrain, or materially adapt third-party AI systems and then deploy them in high-risk contexts — the downstream company inherits all provider obligations. This is particularly relevant for enterprise deployers using third-party AI models and customising them for regulated industries.
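The reclassification rule described above reduces to a simple check on two triggers. The sketch below uses hypothetical names and covers only the two triggers mentioned in the text; whether a modification is "substantial" remains a legal judgement made outside the code.

```python
from enum import Enum


class Role(Enum):
    DEPLOYER = "deployer"
    PROVIDER = "provider"


def effective_role(substantially_modified: bool,
                   new_high_risk_purpose: bool) -> Role:
    """Role a downstream company ends up with under the rule described above.

    substantially_modified: the company fine-tuned, retrained, or materially
        adapted a high-risk system.
    new_high_risk_purpose: the company put a general-purpose system to a
        high-risk use not covered by the original conformity assessment.
    """
    if substantially_modified or new_high_risk_purpose:
        return Role.PROVIDER
    return Role.DEPLOYER


print(effective_role(substantially_modified=False, new_high_risk_purpose=True).value)
```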

Classification Grey Areas — GPAI, Bundled AI, and SaaS Deployment
