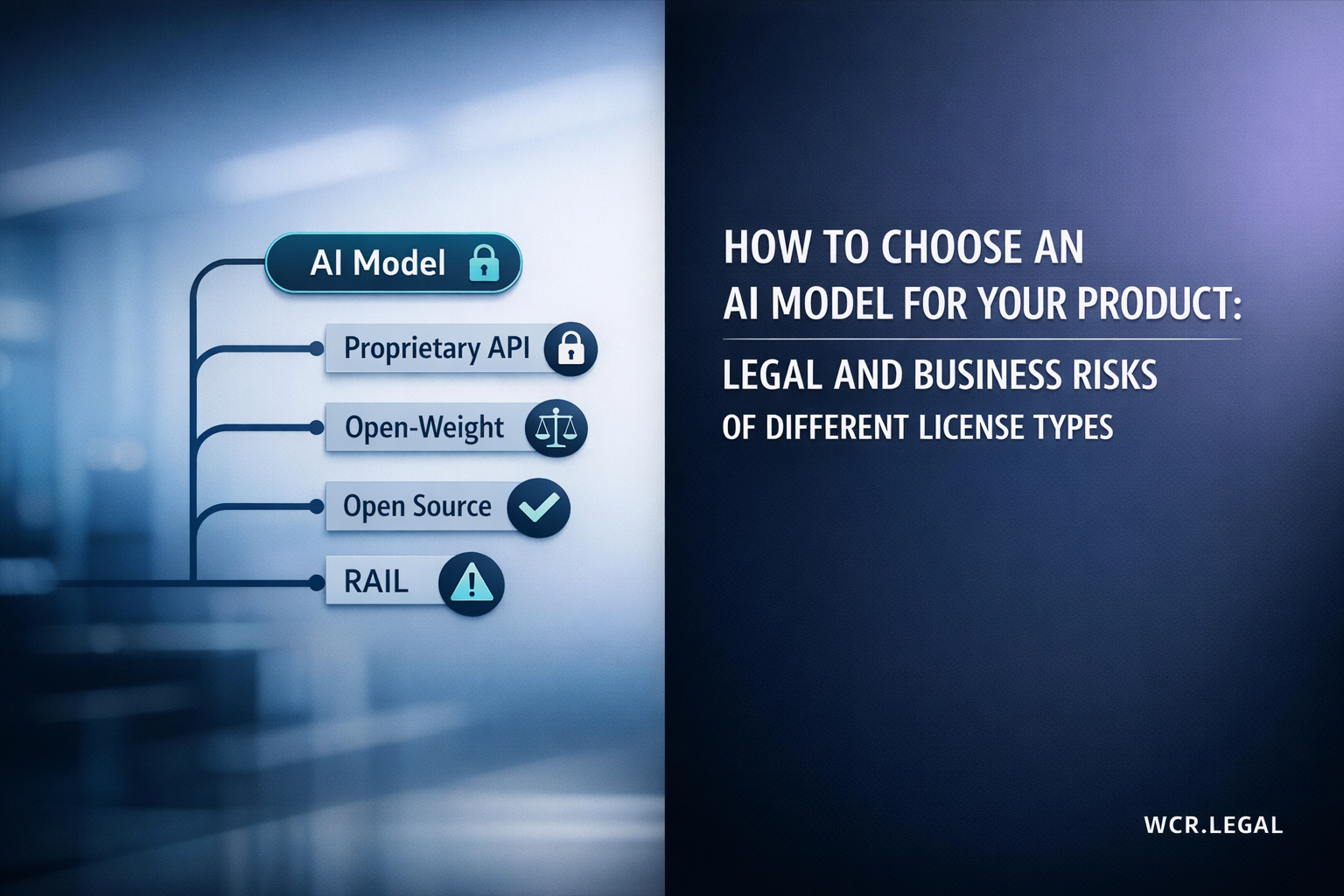

What Happens to the License When You Fine‑Tune a Model

Fine-tuning a model does not create a blank-slate licence. The original model's terms follow your adapted weights, LoRA adapters, and any service built on top of them — often in ways developers do not anticipate until distribution or fundraising.

Introduction — The Licence Does Not Reset When You Fine-Tune

Fine-tuning is widely understood as a technical process: you take a pre-trained model, continue training on a curated dataset, and the result is a model better suited to your use case. What is less well understood is the legal effect of that process. The fine-tuned model, the LoRA adapter, and any product built on either of them carry the original model's licence forward — often with additional obligations triggered by the fine-tune itself.

The assumption that further training creates a new, independently owned model with a clean licence is one of the most common — and most commercially significant — mistakes in AI product development. It surfaces at the worst possible moments: when distributing a fine-tuned model publicly, when closing an enterprise contract that requires licence warranties, and during M&A due diligence when a buyer's legal team reviews the IP chain of a product's core model.

Three Common Misconceptions About Fine-Tuning and Licences

"Fine-tuning creates a new model I own outright"

Fine-tuning modifies the base model's weights — it does not create an independent model. The fine-tuned weights are, at minimum, a derivative work that remains bound by the base model's licence. Ownership of the fine-tune is constrained by what the base licence permits you to own and distribute.

"LoRA adapters are just training data — they're not a model"

LoRA (Low-Rank Adaptation) adapters contain learned weight modifications specific to the base model. They are not standalone — they only function when loaded alongside the base model. Distributing a LoRA adapter effectively distributes a modified version of the base model, and the base licence's distribution rules apply.

"I'm using it as a service — the licence is irrelevant"

Running a fine-tuned model behind an API may avoid licence provisions that are triggered only by distribution of weights. But use-case restrictions, flow-down obligations, and competitor clauses in licences like Llama 3 and Gemma apply regardless of deployment method — API, SaaS, or embedded product.

Where the Legal Questions Cluster

Four Factors That Determine Licence Exposure After Fine-Tuning

Base model licence type

Apache-2.0, a custom licence with an acceptable use policy (Llama 3, Gemma), or proprietary: each creates a different baseline

Fine-tuning method

Full fine-tune vs LoRA adapter vs RLHF — affects whether weights are considered a derivative

Deployment model

Internal use, weights distribution, API service, or embedded in a product — each triggers different clauses

Training dataset licence

CC-BY, CC-BY-SA, proprietary, or scraped data — each carries potential obligations to the resulting model

Note on IP ownership: The question of who owns the weights of a fine-tuned model intersects with broader AI IP ownership questions — including whether training outputs are protectable as copyright and how ownership is structured for products built by teams using multiple base models. For background on AI IP ownership frameworks, see AI IP Ownership — wcr.legal.

Section 1 — How the Original Model Licence Applies to Fine-Tunes and LoRA Adapters

The moment you start fine-tuning a model, the base model's licence governs what you can do with the result. The three licences that matter most for commercial AI development are Meta's Llama 3 Community License, Google's Gemma Terms of Use, and the Apache-2.0 licence under which Mistral released open-weight models such as Mistral 7B and Mixtral 8x7B. They handle fine-tuned derivatives differently, with implications that reach from weight distribution through to enterprise product agreements.

Llama 3 — Community License

Derivative works permitted under the Llama 3 licence only

The Llama 3 Community License explicitly addresses fine-tuned derivatives. A model produced by fine-tuning Llama 3 is a "Llama 3 derivative" and must itself be distributed under the Llama 3 Community License, not under Apache-2.0, MIT, or any other licence. This means every downstream user of your fine-tune inherits the same restrictions you are subject to: the Acceptable Use Policy (AUP), the 700 million monthly active user (MAU) threshold, the competitor restriction, and the ban on using Llama outputs to train other models.

Practically, this limits your commercialisation options. You cannot take a Llama 3 fine-tune and distribute it as if it were your proprietary model with clean IP — the licence follows the weights. However, running the fine-tuned model as a closed API service (where you do not distribute the weights) is permitted under the licence, subject to the use-case restrictions continuing to apply to that service.

Yes — all methods

Llama 3 only

Not permitted

Permitted

Yes — all derivatives

Applies to fine-tunes

Gemma — Terms of Use

Flow-down obligation requires passing the Prohibited Use Policy (PUP) to all downstream recipients

Gemma's Terms of Use treat fine-tuned models as derivatives bound by the same terms. The most commercially significant implication is the flow-down obligation: if you distribute a Gemma fine-tune or run a product built on one, you must ensure that your downstream users — including enterprise clients receiving an API service — operate within the Prohibited Use Policy. This transforms a licensing obligation into a contract management obligation at every layer of your distribution chain.

Google retains unilateral termination rights for any breach of the Terms of Use — a power that extends to breaches by your downstream users if you have not adequately implemented the flow-down. The combination of flow-down obligation and unilateral termination means a compliance failure by one of your enterprise clients could, in theory, expose your own licence to termination.

Yes — all methods

Gemma ToU

Yes — to all users

Permitted + PUP applies

Google retains

Yes — continued use = accept

Mistral — Apache-2.0

Derivatives may be distributed under any licence including proprietary

Mistral's publicly released models (Mistral 7B, Mixtral 8x7B) are distributed under Apache-2.0 — the most permissive framework for fine-tuning. Apache-2.0 permits derivative works to be distributed under any licence, including a proprietary licence that closes the fine-tuned weights entirely. There is no flow-down obligation, no use-case restriction, and no scale threshold. The base licence includes a patent grant covering the licensed code.

The practical implication for product development is significant: a Mistral fine-tune can be proprietary, can be distributed under a custom licence, and can be transferred in an M&A transaction without the licence chain issues that arise with Llama 3 or Gemma derivatives. This is the reason Apache-2.0 models are consistently preferred in enterprise and regulated industry product stacks where IP clarity is a procurement or investment requirement.

Yes — all methods

Any — incl. proprietary

None

Permitted, unrestricted

Included

No — irrevocable grant

LoRA Adapters — Are They Covered by the Base Model Licence?

Why adapter files are not independent of the base model's terms

What makes a LoRA adapter a derivative

A LoRA (Low-Rank Adaptation) adapter is a set of lightweight weight matrices trained to modify a specific base model's outputs. The adapter has no standalone function — it must be merged with or loaded alongside the base model weights to produce any output. From a licence perspective, distributing a LoRA adapter for a Llama 3 or Gemma model is functionally equivalent to distributing a modified version of those weights, because the adapter only exists in relationship to the base model.

What this means in practice

Sharing a LoRA adapter on Hugging Face, GitHub, or via a download link triggers the base model's distribution provisions — the same rules that would apply to sharing full fine-tuned weights. For Llama 3, the adapter must be accompanied by the Llama 3 licence. For Gemma, the flow-down obligation applies to anyone who receives and uses the adapter. For Mistral/Apache-2.0, the adapter can be shared under any licence. Treating LoRA adapters as "just training artefacts" rather than distributed model derivatives is a common compliance gap.
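To make the "no standalone function" point concrete, here is a minimal sketch of the standard LoRA update, in which the adapter stores two small matrices B and A and the effective weight is W + scale * (B x A). The function names, the toy 2x2 shapes, and the `scale` parameter are illustrative assumptions and are not taken from any particular library.

```python
# Toy illustration of a LoRA update: W' = W + scale * (B @ A).
# Pure-Python matrices (lists of rows); all names and values are hypothetical.


def matmul(a, b):
    """Multiply matrix a (n x k) by matrix b (k x m)."""
    return [[sum(a[i][t] * b[t][j] for t in range(len(b)))
             for j in range(len(b[0]))]
            for i in range(len(a))]


def merge_lora(base, lora_b, lora_a, scale=1.0):
    """Return merged weights; the adapter factors alone only yield a delta."""
    delta = matmul(lora_b, lora_a)
    return [[w + scale * d for w, d in zip(w_row, d_row)]
            for w_row, d_row in zip(base, delta)]


base_weights = [[1.0, 0.0], [0.0, 1.0]]  # stands in for the licensed base model
adapter_b = [[1.0], [2.0]]               # rank-1 adapter factors
adapter_a = [[0.5, 0.0]]

merged = merge_lora(base_weights, adapter_b, adapter_a)
```

The adapter factors on their own produce only a delta. Usable weights appear only once `base_weights` is supplied, which is why the adapter is treated here as tied to the base model's terms.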

Fine-Tuning Method vs Licence Coverage — Summary Matrix

Section 2 — Internal Use vs Distributing Your Model or Service

The distinction between using a fine-tuned model internally and making it available to others — whether as downloadable weights, a deployed API, or an embedded product — is the single most important variable in determining which licence obligations are activated. For some provisions, internal use creates no obligation at all. For others, the restriction applies regardless of whether anyone outside your organisation ever touches the model.

Understanding exactly where the "distribution trigger" falls for each licence requires treating each deployment scenario separately. The same fine-tuned model can move from minimal compliance obligations (internal research, where only use-case restrictions apply) to significant downstream obligations (public release) with a single deployment decision.

Internal use — obligations at minimum

Running fine-tuned weights within your own infrastructure

When a fine-tuned model is used exclusively within your organisation — for internal tooling, research, evaluation, or employee-facing products — the licence provisions that are triggered by distribution do not apply. For Llama 3, the weight distribution rules, relicensing restrictions, and derivative model disclosure requirements only activate when you share the weights or product with external parties.

However, use-case restrictions are not gated by distribution. The Llama 3 competitor clause and Gemma's Prohibited Use Policy apply to internal use just as they apply to deployed products. Running a fine-tuned Gemma model to assist with tasks that fall within the PUP prohibition — even for internal employees — constitutes a breach of the licence.

Key boundary: "Internal" means employees and contractors working under your organisation's supervision, on your infrastructure, for your organisation's purposes. Sharing a model with a subsidiary, joint venture partner, or outsourced team may constitute distribution depending on the licence's definition of "affiliate".

Distribution — full obligation stack activated

Making weights or model outputs available externally

"Distribution" in model licence terms covers more than publishing weights on Hugging Face. It includes: making weights downloadable by any third party, bundling a model into a software product delivered to customers, providing API access to a model (for licences that treat API provision as distribution), and transferring weights in an M&A transaction or investment structure.

For Llama 3, distribution of fine-tuned weights requires the recipient to receive the Llama 3 Community License. For Gemma, distribution activates the flow-down obligation: the recipient must be contractually bound to the PUP before they can legally use the derivative. For Mistral/Apache-2.0, distribution can occur under any licence, subject only to Apache-2.0's notice conditions (pass on a copy of the licence, keep existing attribution notices, and mark modified files).

The API grey area: Running a model as an API service is not weight distribution in the conventional sense — users interact with the model but do not receive the weights. Most major model licences treat this as a commercial use scenario rather than a distribution scenario, meaning weight-specific provisions (derivative licensing, source disclosure) may not apply. But use-case and flow-down provisions continue to apply to API-delivered services.
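The internal / API / weights distinction described above can be sketched as a small lookup. This is a simplified, hypothetical encoding of this article's summary, not of the licence texts themselves: the licence keys, obligation labels, and the `active_obligations` function are all assumptions made for illustration, and the output is not legal advice.

```python
# Hypothetical encoding of the article's distribution triggers.
# Labels paraphrase the article; they are not quotes from any licence.

# Use-case restrictions: apply even to purely internal use.
ALWAYS_ON = {
    "llama3": {"acceptable use policy", "competitor restriction"},
    "gemma": {"prohibited use policy"},
    "apache-2.0": set(),
}

# Triggered once anyone outside the organisation can reach the model
# (API service or weights).
ON_EXTERNAL_ACCESS = {
    "llama3": set(),
    "gemma": {"PUP flow-down to downstream users"},
    "apache-2.0": set(),
}

# Triggered only when the weights themselves leave the organisation.
ON_WEIGHT_DISTRIBUTION = {
    "llama3": {"pass on Llama 3 Community License"},
    "gemma": {"distribute under Gemma Terms of Use"},
    "apache-2.0": {"attribution"},
}


def active_obligations(licence, deployment):
    """Return the obligations the article associates with a deployment mode."""
    if deployment not in ("internal", "api", "weights"):
        raise ValueError(f"unknown deployment mode: {deployment}")
    obligations = set(ALWAYS_ON[licence])
    if deployment in ("api", "weights"):
        obligations |= ON_EXTERNAL_ACCESS[licence]
    if deployment == "weights":
        obligations |= ON_WEIGHT_DISTRIBUTION[licence]
    return obligations


gemma_api = active_obligations("gemma", "api")
```

Splitting the rules into three tables mirrors the argument in this section: use-case restrictions never switch off, flow-down starts with external access, and weight-specific terms start only when weights are handed over.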

Distribution Trigger — What Activates Which Obligations

M&A and fundraising note: In due diligence for a startup whose core product is built on a fine-tuned Llama 3 or Gemma model, the question is not just "what licence does the model ship under?" — it is "does the licence bind the acquirer, and on what terms?" For Llama 3 and Gemma derivatives, the acquirer inherits the same licence constraints as the seller, including the 700M MAU clause and PUP flow-down. This is a material term that must be disclosed in the IP schedule and reviewed by the buyer's legal team as part of standard AI IP due diligence.

Section 3 — Ownership Questions Around Weights After Further Training

Fine-tuning a model generates new weight values that did not exist before. The natural assumption is that the entity performing the training owns what it creates. The reality is more nuanced: ownership of fine-tuned weights depends on the base model licence, the copyright status of the training data, employment and contractor agreements within the team doing the training, and the unsettled legal question of whether AI-generated weight changes are protectable at all under current copyright law.

The Ownership Stack — What You Are Actually Claiming Rights Over

Three Unresolved Questions That Affect Fine-Tune Ownership Claims

Is a fine-tuned model a "derivative work" under copyright?

Copyright protection requires a human-authored creative contribution. Fine-tuning involves selecting a dataset and hyperparameters — but the weight modifications themselves are generated by an automated process. Whether that process produces a copyrightable derivative work depends on the level of human creative input, which varies by fine-tuning method and by jurisdiction. No major copyright authority has issued a definitive ruling on model-weight derivatives.

Do employment and contractor agreements capture the fine-tune?

Even where a fine-tune might be copyright-protectable, the ownership question shifts to whether your organisation — rather than the individual researchers who ran the training — holds the rights. This requires valid work-for-hire or IP assignment clauses in employment and contractor agreements that expressly cover AI model training outputs. Standard software IP assignment clauses may not be drafted broadly enough to cover model weights created using third-party licensed base models.

Does the base model licence constrain ownership claims?

For Llama 3 and Gemma derivatives, the licence restricts how you can characterise ownership of the fine-tune — you cannot represent your Llama 3 fine-tune as your unencumbered proprietary model because it must be distributed under the Llama 3 licence. For Apache-2.0 models, no such constraint exists: a Mistral fine-tune can be distributed as a proprietary model, and the ownership claim to the new weights is legally cleaner.

The problem

Copyright protects original human expression. Training a model does not require human expression in the weights: it requires human decisions about data selection, task framing, and evaluation criteria, but the weight values themselves emerge from a mathematical optimisation process. Several authorities, including the US Copyright Office, have declined to protect AI-generated outputs without substantial human authorship. Whether fine-tuning constitutes sufficient human authorship to generate copyright in the resulting weights is an open question with significant commercial implications.

The practical implication

If fine-tuned weights are not copyright-protectable in a given jurisdiction, your primary protection for the model is contractual (licence terms and trade secrecy) rather than copyright. This matters for enforcement: trade secrets require active protection measures and are lost if disclosed without protection. A model distributed under a custom licence without copyright backing is protected only as long as the contract is enforceable and the weights remain non-public. Due diligence for investments or acquisitions involving proprietary fine-tuned models should include a legal assessment of the copyright position in the target's key jurisdictions.

Ownership Clarity by Base Model and Scenario

Section 4 — Risks When You Mix Models and Datasets Under Different Licences

Most production fine-tuning pipelines involve at least two licence sources: the base model and the training dataset. Many involve more — continued pre-training on a second model, RLHF using a reward model under a separate licence, or synthetic data generated by a proprietary model. Each licence combination creates a potential conflict that, if unaddressed, can contaminate the fine-tuned model's legal status for all downstream uses.

Unlike software dependency licence conflicts, which are well-documented and covered by standard OSS compliance tooling, model licence mixing has no established resolution framework and limited case law. The risks are real, the obligations are contractual (making breaches enforceable), and the worst-case outcomes — model withdrawal, injunction, or forced re-training — are commercially severe.

Four High-Risk Mixing Scenarios

Fine-tuning a Llama 3 model on a CC-BY-SA dataset

Copyleft dataset meets custom model licence — obligations collide

Creative Commons BY-SA (Share-Alike) is a copyleft licence: any adaptation of a CC-BY-SA dataset that you share must be distributed under the same or a compatible licence. If you fine-tune Llama 3 on a CC-BY-SA corpus and the resulting model is considered a derivative of the dataset, you face two conflicting obligations: the Llama 3 licence requires distribution under Llama 3 terms only, while CC-BY-SA requires distribution under CC-BY-SA or compatible terms. The two cannot both be satisfied for the same set of weights.

The question of whether a model is a "derivative work" of its training data is unsettled, but the risk is live: several dataset providers have explicitly asserted that models trained on their CC-BY-SA data are derivatives. If that position is accepted in litigation or arbitration, a Llama 3 fine-tune trained on CC-BY-SA data would face a licence conflict with no clean resolution.

Continued pre-training on Gemma, then LoRA fine-tuning on Llama 3

Two custom model licences with conflicting downstream obligations

Some development pipelines use one model for continued pre-training (domain adaptation on large unlabelled corpora) and a different model as the base for task-specific fine-tuning. If the pre-training stage uses Gemma weights and the fine-tuning stage uses Llama 3 weights — or vice versa — the resulting model carries obligations from both licences simultaneously.

Gemma requires flow-down of the Prohibited Use Policy to all downstream recipients. Llama 3 requires distribution only under the Llama 3 Community License and prohibits relicensing under other terms. If both licences bind the resulting model, satisfying one may make it impossible to satisfy the other without additional agreements with both Google and Meta. In practice, this scenario should be avoided by selecting a single base model licence track and staying within it throughout the pipeline.

Using GPT-4 / proprietary model outputs as synthetic training data

OpenAI and similar providers explicitly prohibit using outputs to train competing models

A widely used fine-tuning technique generates synthetic training data by prompting a large proprietary model (GPT-4, Claude, Gemini) and using the outputs to train a smaller open-weight model. OpenAI's Terms of Service explicitly prohibit using outputs from OpenAI models to develop AI models that compete with OpenAI's products. Similar restrictions appear in Anthropic's and Google's API terms.

This prohibition applies regardless of the base model being fine-tuned. If you generate synthetic instruction data using GPT-4 and use it to fine-tune Mistral, Llama 3, or Gemma, you remain bound by the terms you accepted with OpenAI when generating that data, even though the weights themselves derive from a different model. The contamination is in the dataset, not the base model licence.
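A dataset licence audit for the first and third scenarios can be sketched as a simple flagging pass. The source labels (`"cc-by-sa"`, `"proprietary-model-output"`), the flag wording, and the `dataset_flags` function are hypothetical, and the rules encode only the two dataset-side conflicts described in this section.

```python
# Hypothetical dataset audit covering two scenarios from this section:
# CC-BY-SA data under a Llama 3 base, and synthetic data from a proprietary model.


def dataset_flags(base_licence, dataset_sources):
    """Return human-readable warnings for risky base/dataset combinations."""
    flags = []
    for source in dataset_sources:
        if source == "cc-by-sa" and base_licence == "llama3":
            # Share-alike terms collide with Llama 3-only distribution.
            flags.append("share-alike conflict with Llama 3-only distribution")
        elif source == "proprietary-model-output":
            # Applies whatever the base model is: the restriction sits in the data.
            flags.append("provider terms may prohibit training competing models")
    return flags


mistral_with_synthetic = dataset_flags("apache-2.0", ["proprietary-model-output"])
llama_with_copyleft = dataset_flags("llama3", ["cc-by", "cc-by-sa"])
```

Note that the synthetic-data flag fires even for an Apache-2.0 base: a permissive model licence does not remove restrictions attached to the training data.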

Mixing Apache-2.0 and custom-licence model weights (model merging)

Model merging or ensemble techniques applied across licence types

Techniques like SLERP merging and linear weight interpolation combine weights from two or more base models into a single merged model, while ensembling runs the source models side by side and combines their outputs. If one source model is Apache-2.0 (Mistral) and the other is Llama 3 or Gemma, the merged or ensembled result inherits the more restrictive licence obligations from the custom model: Apache-2.0 does not "cleanse" the result.

Merged models published on Hugging Face using Llama 3 as one source must include the Llama 3 Community License. The merged model cannot be redistributed under Apache-2.0 alone, because the Llama 3 weights — even partially — are present in the merged output and remain bound by the Llama 3 licence. This is particularly relevant for the growing ecosystem of publicly shared merged models where licence chain compliance is frequently absent.
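The "most restrictive licence wins" rule for merged models, together with the two-custom-licence conflict from the second scenario, can be sketched as follows. The licence keys, return strings, and the `outbound_licence` function are illustrative assumptions rather than an established compliance tool.

```python
# Hypothetical resolver for the outbound licence of a merged model.
# Rule from this section: any custom-licence source binds the merged result,
# and two different custom licences cannot both be satisfied.

KNOWN_LICENCES = {"apache-2.0", "llama3", "gemma"}
CUSTOM_LICENCES = {"llama3", "gemma"}


def outbound_licence(source_licences):
    """Return the licence a merge must ship under, or a conflict marker."""
    sources = set(source_licences)
    unknown = sources - KNOWN_LICENCES
    if unknown:
        raise ValueError(f"unreviewed licences: {sorted(unknown)}")
    custom = sorted(sources & CUSTOM_LICENCES)
    if len(custom) > 1:
        return "conflict: " + " + ".join(custom)
    if custom:
        return custom[0]
    return "any (Apache-2.0 sources only)"


mistral_plus_llama = outbound_licence(["apache-2.0", "llama3"])
```

Raising on unknown licences is a deliberate choice in this sketch: an unreviewed source should stop the pipeline rather than default to "permitted".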

Dataset Licence Compatibility — Common Fine-Tuning Combinations

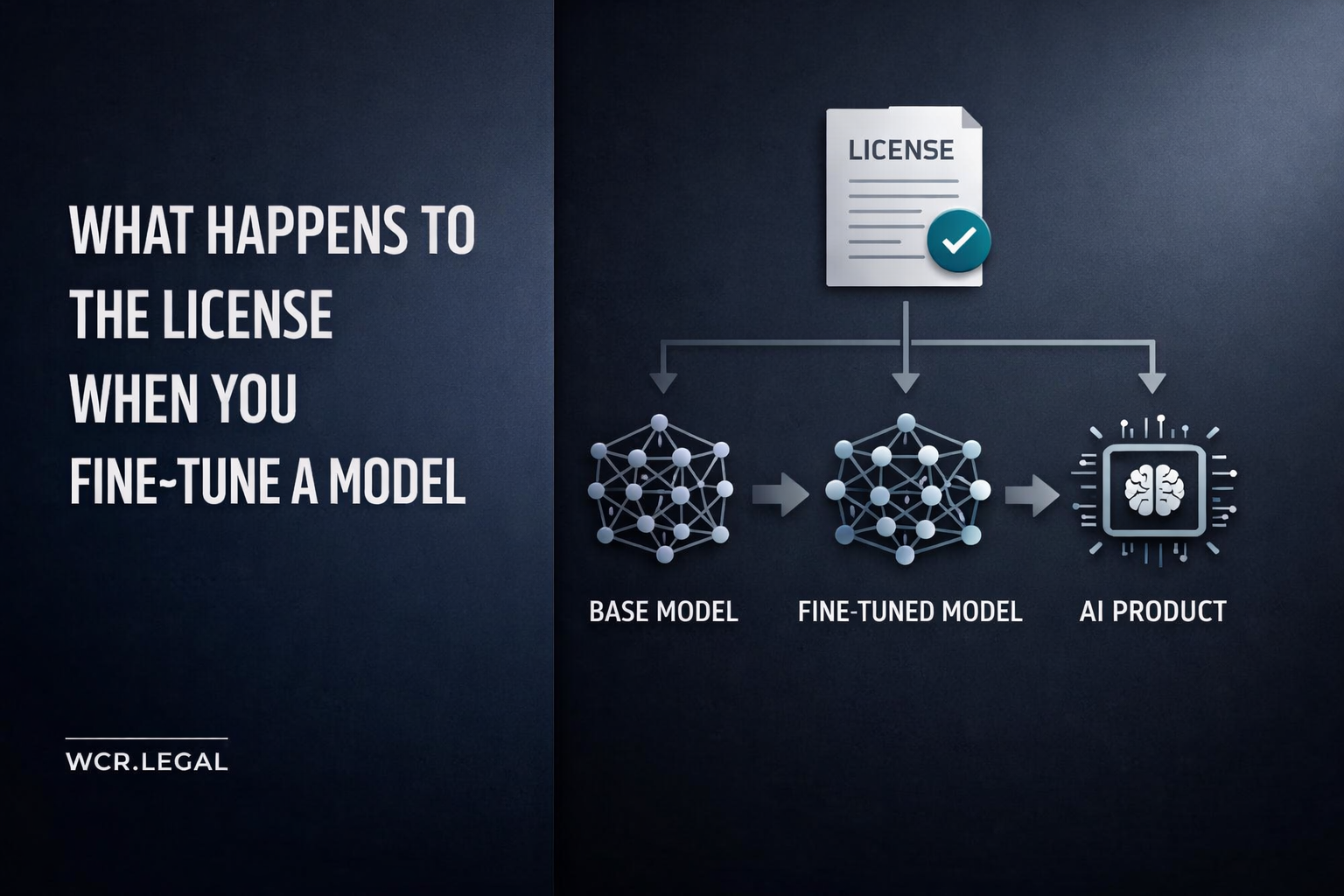

The Licence Follows the Weights — Through Every Stage of Training

Fine-tuning is a legal event as much as a technical one. The base model's licence attaches to the fine-tuned weights, the LoRA adapters, and every product that exposes those weights to users, whether as a download, an API, or an embedded feature. The licence does not reset, it does not weaken, and it does not transfer ownership of the base model's IP to the fine-tuner.

The key distinctions that govern compliance are clear in structure: internal use avoids distribution triggers but not use-case restrictions; distributing weights (public release, bundled products, M&A transfers) activates the full obligation stack, while API services avoid the weight-specific provisions but remain subject to use-case and flow-down terms; and model or dataset mixing inherits the most restrictive licence in the pipeline. Where Mistral/Apache-2.0 performs comparably to Llama 3 or Gemma, choosing the Apache-2.0 model removes the base-model licence risks at source, although dataset-side restrictions (copyleft corpora, synthetic data from proprietary models) still need their own review.

For teams already working with Llama 3 or Gemma fine-tunes, the priority is documentation: provenance records, dataset licence audits, and contract updates (PUP flow-down, Llama 3 licence pass-through) to enterprise customer agreements. These are not just compliance items — they are the materials that will be reviewed in every fundraising round and acquisition conversation involving an AI product with a custom-licence model at its core.
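A provenance record of the kind described above might be sketched as a small data structure covering the four exposure factors (base licence, method, deployment, dataset licences). The class name, field names, and example values are hypothetical; a real record would follow whatever schema your legal and engineering teams agree on.

```python
# Hypothetical provenance record for a fine-tuned model.
import json
from dataclasses import dataclass, field, asdict


@dataclass
class FineTuneProvenance:
    model_name: str
    base_model: str
    base_licence: str
    method: str        # e.g. "full", "lora", "rlhf"
    deployment: str    # e.g. "internal", "api", "weights"
    datasets: dict = field(default_factory=dict)  # dataset name -> licence

    def unreviewed_datasets(self):
        """Names of datasets with no recorded licence."""
        return sorted(name for name, lic in self.datasets.items() if not lic)


record = FineTuneProvenance(
    model_name="support-assistant-v1",  # illustrative values only
    base_model="Llama 3 8B",
    base_licence="Llama 3 Community License",
    method="lora",
    deployment="api",
    datasets={"internal-tickets": "proprietary", "forum-scrape": ""},
)

# Serialise for the IP schedule or a due diligence data room.
record_json = json.dumps(asdict(record), indent=2)
```

Keeping the record as plain data makes it easy to export, and `unreviewed_datasets()` gives a quick list of gaps to close before a fundraising round or acquisition review.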

For the broader framework on AI IP ownership and how model licence choice interacts with investment structuring, see AI IP Ownership — wcr.legal.